Supermicro AS-5014A-TT Internal Hardware Overview

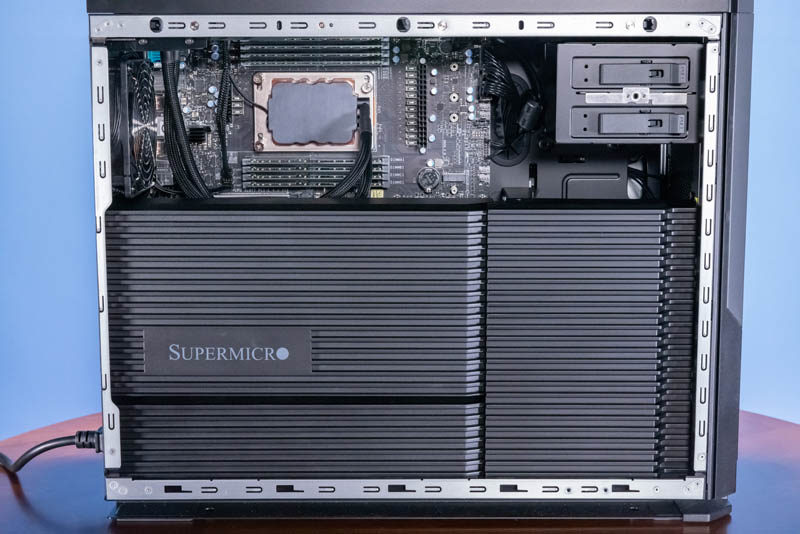

The AS-5014A-TT came with a clear side panel that caused reflections in photos, so here is the side with that panel off. One can see the CPU and memory, along with the mounting points for 5.25″ hardware, but there is little else. Instead, Supermicro has an elaborate-looking shroud system hiding components underneath.

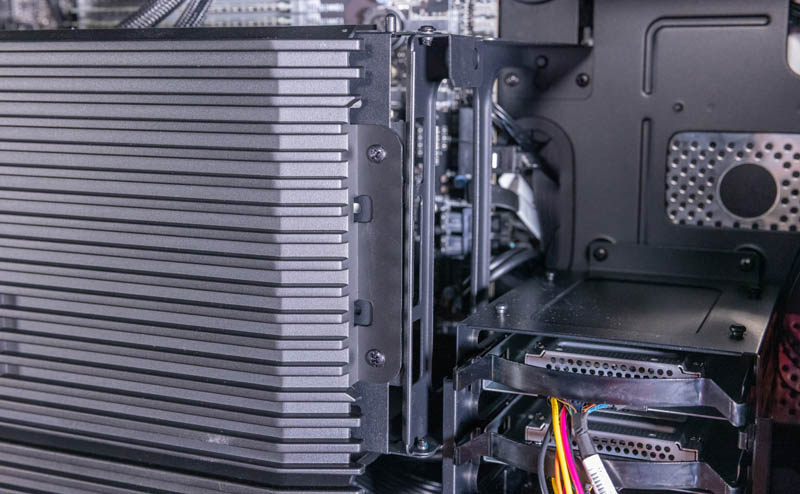

The right shroud pulls out fairly easily via a locking tab, but the larger panel requires two screws. We wish Supermicro would make this screw-less in future versions.

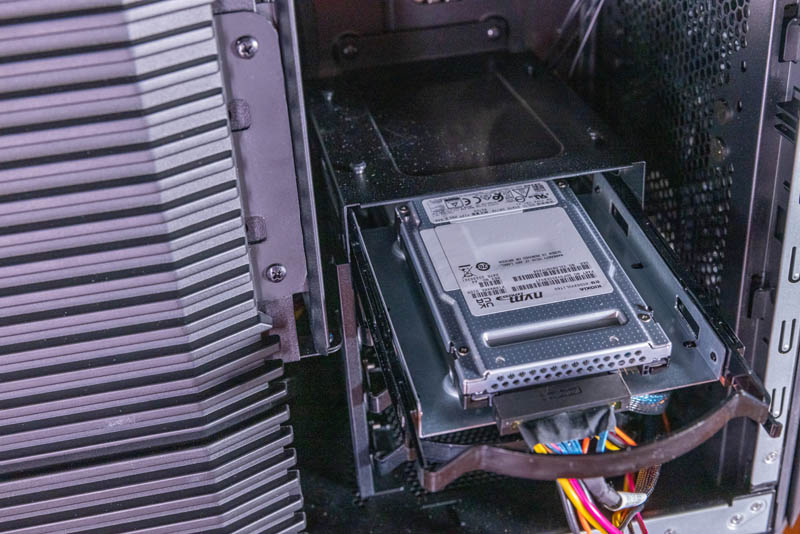

Under that right shroud, we have our 3.5″ storage bays. With mounting adapters, these 3.5″ bays can also house 2.5″ drives, including NVMe drives like the Kioxia CD6 drives we have here. These are not hot-swap bays. That is pretty common in this class of workstation, especially as SSDs have become so much more reliable than their rotating predecessors.

Removing the larger left shroud, we get a GPU or expansion card support bracket. This is used to secure large and heavy PCIe devices during shipping and also to prevent the card's PCB from gradually sagging under its own weight over time.

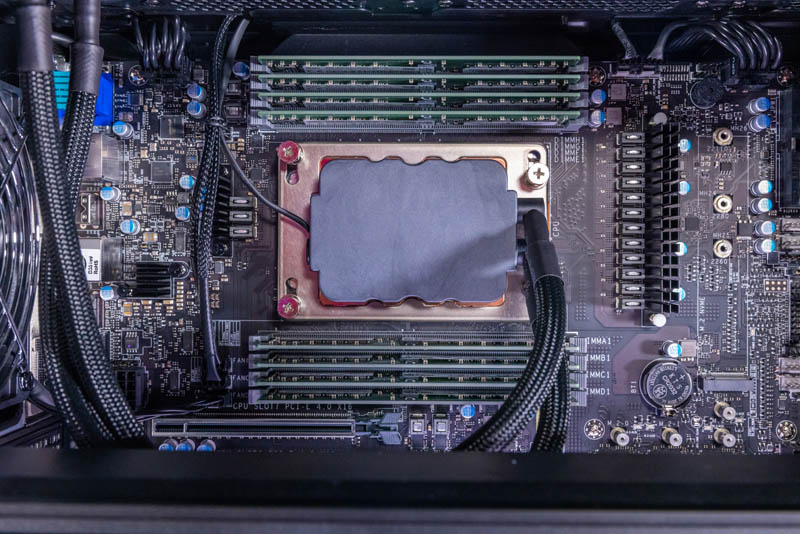

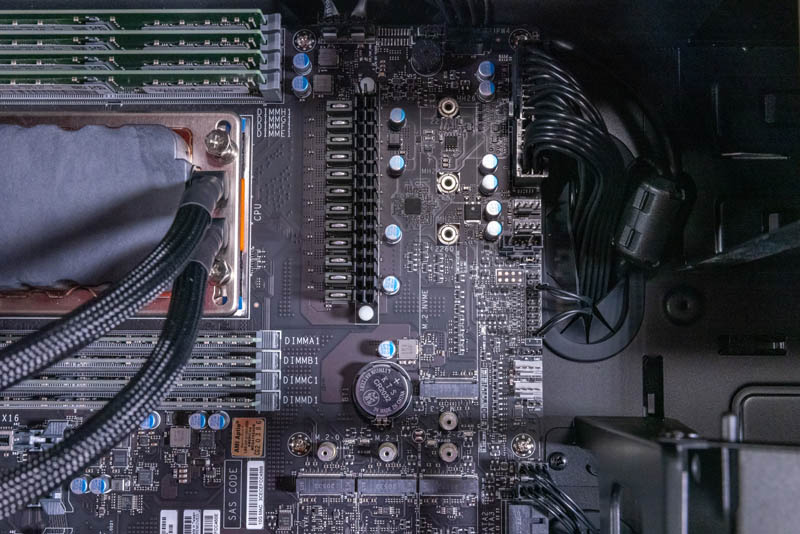

The CPU itself is very interesting. This is an AMD Ryzen Threadripper Pro workstation, and in ours, we have the Threadripper Pro 5995WX. Cooling this, we have an AIO liquid cooling loop. As we will see, that seems to perform better than the air coolers used in other brands’ workstations.

Here we get 8x DDR4-3200 slots. In our system, we are using 32GB RDIMMs for a total of 256GB of memory. That is likely to be on the lower end for systems using the Threadripper Pro 5995WX 64-core CPU.
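As a quick sanity check on those memory figures, here is a minimal Python sketch of the arithmetic. The slot count, DIMM size, and DDR4-3200 speed come from the system above. The bandwidth figure is a theoretical peak only, and it assumes one DIMM per channel across eight channels with a 64-bit data path each; the function and constant names are ours, purely for illustration.

```python
# Figures from the review: 8 DIMM slots, 32 GB RDIMMs, DDR4-3200.
DIMM_SLOTS = 8
DIMM_SIZE_GB = 32
TRANSFER_RATE_MT_S = 3200  # DDR4-3200 means 3200 mega-transfers per second
BYTES_PER_TRANSFER = 8     # assumption: 64-bit data path per channel, ECC bits excluded


def total_capacity_gb(slots: int, dimm_gb: int) -> int:
    """Installed capacity when every slot holds the same size DIMM."""
    return slots * dimm_gb


def peak_bandwidth_gb_s(channels: int, mt_s: int, bytes_per_transfer: int) -> float:
    """Theoretical peak bandwidth in GB/s (decimal), not a measured result."""
    return channels * mt_s * bytes_per_transfer / 1000


# Assumption: one DIMM per channel, so channels == populated slots.
capacity = total_capacity_gb(DIMM_SLOTS, DIMM_SIZE_GB)
bandwidth = peak_bandwidth_gb_s(DIMM_SLOTS, TRANSFER_RATE_MT_S, BYTES_PER_TRANSFER)

print(f"Capacity: {capacity} GB")
print(f"Theoretical peak bandwidth: {bandwidth:.1f} GB/s")
```

Swapping `DIMM_SIZE_GB` for a larger RDIMM size shows how quickly capacity scales in the same eight slots, while the theoretical bandwidth stays the same.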

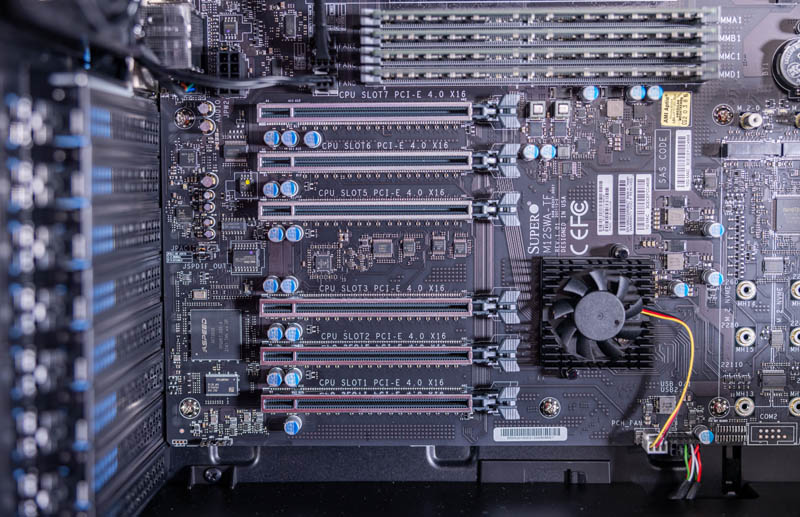

The expansion card slot situation is really interesting. We get a total of six slots. All are PCIe Gen4 x16 card slots. Both Slot5 and Slot1 have a second I/O plate mounting at the rear for double-width cards. Using those two slots for double-width cards means that all six slots can still be used simultaneously. That is the power of the large chassis and AMD Ryzen Threadripper Pro.

Next to the CPU, there is an M.2 slot, but this is not the only M.2 storage option on the motherboard.

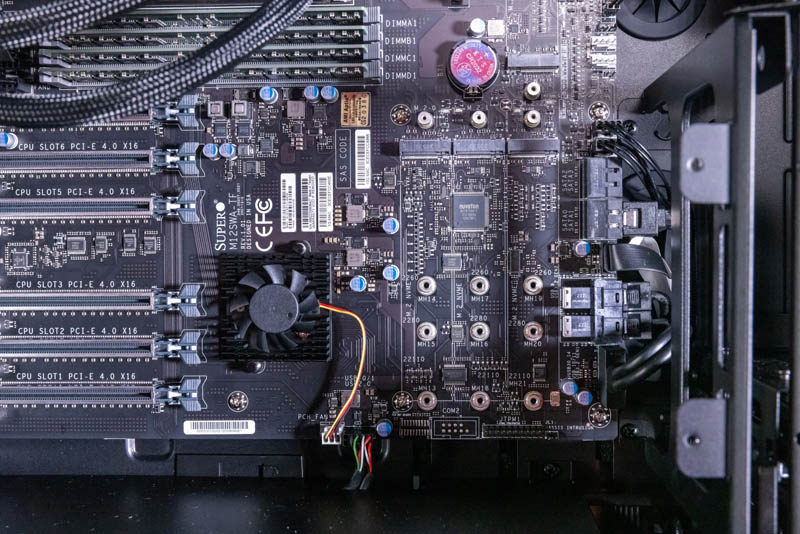

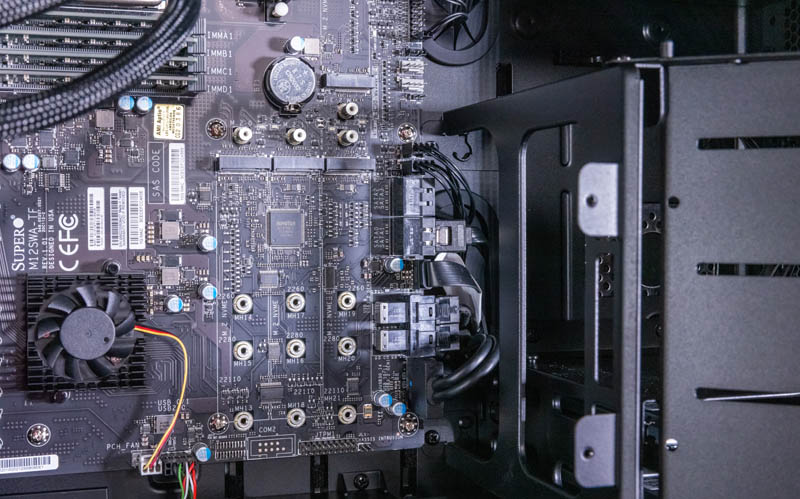

There are three more slots. One can have 6x PCIe Gen4 x16 slots and still have additional room for 4x M.2 storage.

The Kioxia SSDs we have in the system use the U.2 ports next to the M.2 ports. We will have the block diagram so you can see how all of this is wired, given we have a lot of I/O here.
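To give a rough sense of why that wiring matters, here is a small Python sketch of a PCIe lane budget for the slots described above. The six x16 slots and four M.2 slots come from the review. The x4 width per M.2 slot is our assumption, and the 128-lane figure is AMD's published CPU lane count for Threadripper Pro. The U.2 ports, onboard networking, and anything hanging off the chipset are deliberately left out, so treat this as a back-of-the-envelope estimate rather than the board's actual topology.

```python
# AMD's published PCIe Gen4 lane count for Threadripper Pro CPUs.
CPU_PCIE_LANES = 128

# (count, lanes per device). Slot counts are from the review;
# the x4 width for each M.2 slot is an assumption.
DEVICES = {
    "PCIe Gen4 x16 slots": (6, 16),
    "M.2 slots": (4, 4),
}


def lanes_needed(devices: dict[str, tuple[int, int]]) -> int:
    """Sum the lanes required if every listed device runs at full width."""
    return sum(count * width for count, width in devices.values())


needed = lanes_needed(DEVICES)
remaining = CPU_PCIE_LANES - needed

for name, (count, width) in DEVICES.items():
    print(f"{name}: {count} x {width} = {count * width} lanes")
print(f"Total needed: {needed} of {CPU_PCIE_LANES} lanes")
print(f"Left for U.2, networking, and other I/O: {remaining} lanes")
```

Under these assumptions the slots and M.2 storage together fit within the CPU's lanes, which is consistent with running everything at full width, but the block diagram is the authoritative source for how the lanes are actually assigned.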

SATA is really being phased out of higher-end workstations. 8-10 years ago, a workstation like this might have 16-20 (or more) ports for SATA drives. Now, we have four. Two are used for those front 2.5″ bays.

Next, let us get to the system topology.

Nice. Good PCIe slot layout that accommodates two GPUs without blocking other slots. Some people (me, for example) cannot have too many GPUs in a machine; it’d be great if a revision of the case had off-board physical mounting for a couple more GPUs to be connected with riser cables.

Nevertheless, compared to, say, certain other manufacturers’ two-slot TR Pro offerings, this is brilliant.

That’s a sharp looking workstation case.

Would like to see the real chess bench nodes/sec numbers!

Nice reviews as always..

Yes – nice workstation – I just bought one. The only thing I really miss (so far) is the PMBUS for the power supply – it doesn’t support this. So, no monitoring power usage via IPMI. Which I do miss.

Also, had to move an NVIDIA 3060 consumer-grade card around in the PCIe slots until I found the one where it stopped acting “flaky” – of course this could be the card itself.

One last gripe: the Supermicro store does NOT carry the conversion kit to have this rack mounted; you have to purchase it (if you can find it) at other outlets.

Overall, I do give it a thumbs up :-)

these machines are onerous overpriced anachronisms from the get go due to pricing and marketing decisions – they will only be around for a little while due to these factors – the future of hedt is in a lull but the lull won’t last long – think arm and tachyum options plus clustering – you can get many machines for the same price in diy mode and surpass overall performance – this is not innovative or clever but more like unclear. final answer

@opensourceservers most people or rather companies running these have production workloads where expert salary and software licences are the main cost factors. Even if such a system is changed every ~2-3 years it will be <= 10% of the total costs related to that employee. What you are talking about with arm clusters is a totally different type of scenario.

These would probably be monster scale-up mixed usage database servers. I’d love to see a set of SQL benchmarks (but the MS SQL enterprise license cost of such a system would be scary). If your workload doesn’t shard and parallelize well, a classic SQL server scale-up system can perform much better at a lower cost, and these can have huge RAM and extremely fast direct-attached storage, with even more storage as NVMe-oF with DPUs.

@David Artz. Can you point me towards a suitable rack mount kit vendor please.

Not a fan of this new SuperChassis GS7A-2000B with its LED lighting bar.

I much prefer the older designs: CSE-743AC-1K26B-SQ or CSE-745BAC-R1K23B-SQ, CSE-745BAC-R1K23B or 11 slot CSE-747BTQ-R2K04B, all in 4U Form Factor.