PNY GeForce RTX 2080 Ti Blower Deep Learning Benchmarks

As we continue to innovate on our review format, we are now adding deep learning benchmarks. In future reviews, we will add more results to this data set. At this point, we have a fairly nice data set to work with.

ResNet-50 Inferencing in TensorRT using Tensor Cores

ImageNet is an image classification database launched in 2007 and designed for use in visual object recognition research. It is organized by the WordNet hierarchy, and hundreds of example images represent each node (or category of specific nouns).

For our inferencing benchmarks, we run a ResNet-50 model trained in Caffe using the following command line:

```
nvidia-docker run --shm-size=1g --ipc=host --ulimit memlock=-1 --ulimit stack=67108864 --rm \
  -v ~/Downloads/models/:/models -w /opt/tensorrt/bin \
  nvcr.io/nvidia/tensorrt:18.11-py3 \
  giexec --deploy=/models/ResNet-50-deploy.prototxt --model=/models/ResNet-50-model.caffemodel \
  --output=prob --batch=16 --iterations=500 --fp16
```

Options are:

--deploy: Path to the Caffe deploy (.prototxt) file that defines the network architecture

--model: Path to the trained model weights (.caffemodel)

--output: Output blob name

--batch: Batch size to use for inferencing

--iterations: The number of iterations to run

--int8: Use INT8 precision

--fp16: Use FP16 precision (for Volta or Turing GPUs); if neither --int8 nor --fp16 is specified, FP32 is used

We vary the batch size across 16, 32, 64, and 128, and the precision across INT8, FP16, and FP32.
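The sweep described above (four batch sizes times three precisions, or twelve runs) can be sketched as a small Python helper that assembles the container-side giexec arguments for each run. This is a hypothetical convenience script, not part of TensorRT or of our actual tooling; the name `build_sweep` is illustrative, and it reuses only the flags and paths from the command shown earlier. Each argument list would still need that command's nvidia-docker prefix.

```python
from itertools import product

# Flags and paths copied from the giexec command above; /models is the
# directory mounted with -v ~/Downloads/models/:/models.
BASE_ARGS = [
    "giexec",
    "--deploy=/models/ResNet-50-deploy.prototxt",
    "--model=/models/ResNet-50-model.caffemodel",
    "--output=prob",
    "--iterations=500",
]

BATCH_SIZES = [16, 32, 64, 128]
# FP32 is the default when no precision flag is given, so it adds nothing.
PRECISION_FLAGS = {"INT8": ["--int8"], "FP16": ["--fp16"], "FP32": []}


def build_sweep():
    """Return (batch, precision, argv) for every batch/precision combination."""
    sweep = []
    for batch, precision in product(BATCH_SIZES, PRECISION_FLAGS):
        argv = BASE_ARGS + [f"--batch={batch}"] + PRECISION_FLAGS[precision]
        sweep.append((batch, precision, argv))
    return sweep


if __name__ == "__main__":
    for batch, precision, argv in build_sweep():
        print(f"{precision:>4} batch={batch:<3} " + " ".join(argv))
```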

Results are reported as inference latency (in seconds). Dividing the batch size by the latency gives the throughput (images/sec), which is what we plot on our charts.
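As a worked example of that conversion, the function below divides the batch size by the latency in seconds. The function name and the sample latency are hypothetical and are not measured results from this review.

```python
def throughput_images_per_sec(batch_size: int, latency_s: float) -> float:
    """Images per second when one batch of batch_size takes latency_s seconds."""
    if latency_s <= 0:
        raise ValueError("latency must be positive")
    return batch_size / latency_s


if __name__ == "__main__":
    # Hypothetical latency for illustration only.
    print(throughput_images_per_sec(128, 0.25))
```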

We also found that this benchmark does not use multiple GPUs; it runs on a single GPU only. You can, however, run a separate instance on each GPU using commands like:

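```
# One benchmark instance pinned to each GPU, each started in the background
NV_GPU=0 nvidia-docker run ... &
NV_GPU=1 nvidia-docker run ... &
# Block until both instances finish
wait
```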

With these commands, a user can scale workloads across many GPUs. Our graphs show combined totals.
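When one instance runs on each GPU, the combined figure is the sum of each instance's batch size divided by its latency. A minimal sketch, assuming the instances run independently and concurrently and each reports latency in seconds; the dictionary layout and the sample numbers are hypothetical.

```python
def combined_throughput(per_gpu_results):
    """Sum throughput across independent single-GPU instances.

    per_gpu_results maps a GPU index to (batch_size, latency_s) for the
    instance pinned to that GPU. Because the instances do not share work,
    the combined images/sec is a plain sum of each instance's batch / latency.
    """
    total = 0.0
    for batch_size, latency_s in per_gpu_results.values():
        total += batch_size / latency_s
    return total


if __name__ == "__main__":
    # Hypothetical two-GPU run for illustration only.
    print(combined_throughput({0: (16, 0.5), 1: (16, 0.25)}))
```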

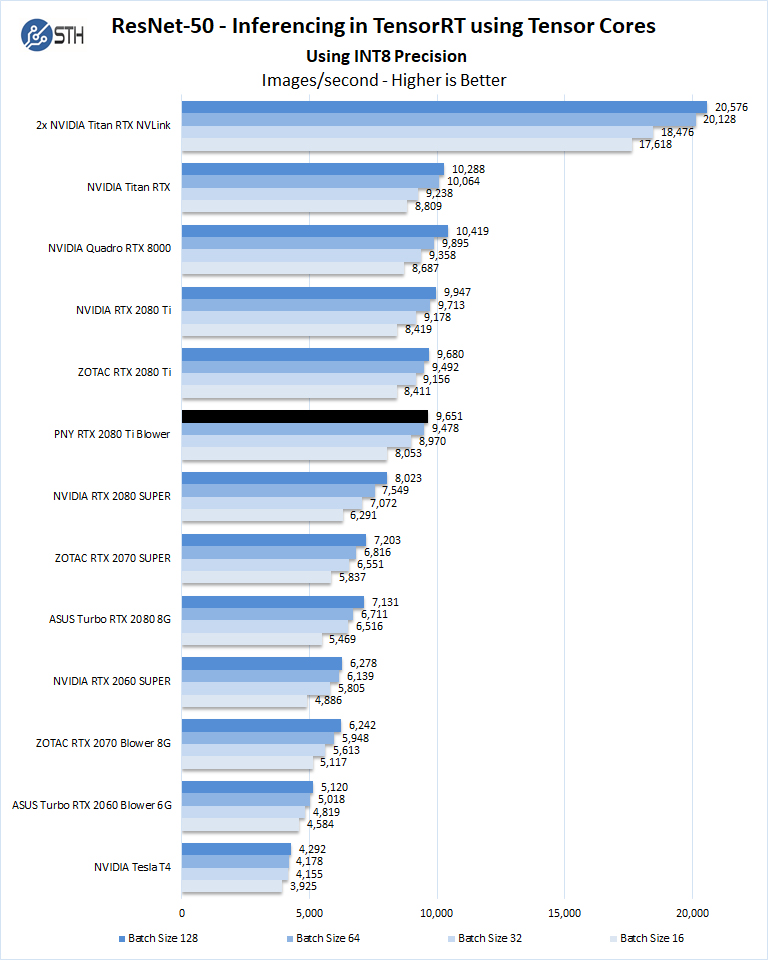

We start with Turing’s new INT8 mode, which is one of the benefits of using NVIDIA’s RTX cards.

INT8 precision is by far the fastest inferencing method; if at all possible, converting models to INT8 will yield faster runs. Additional memory allows for larger batch sizes, which help lead to faster runs. With INT8 this is less of an issue, but we see it more on other tests.

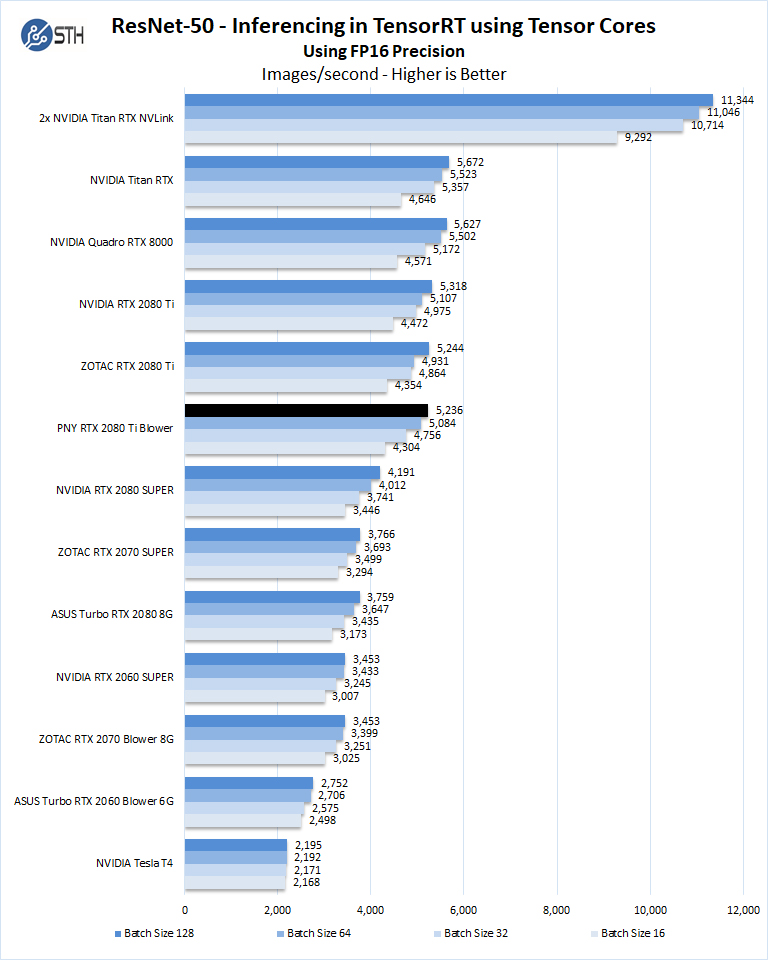

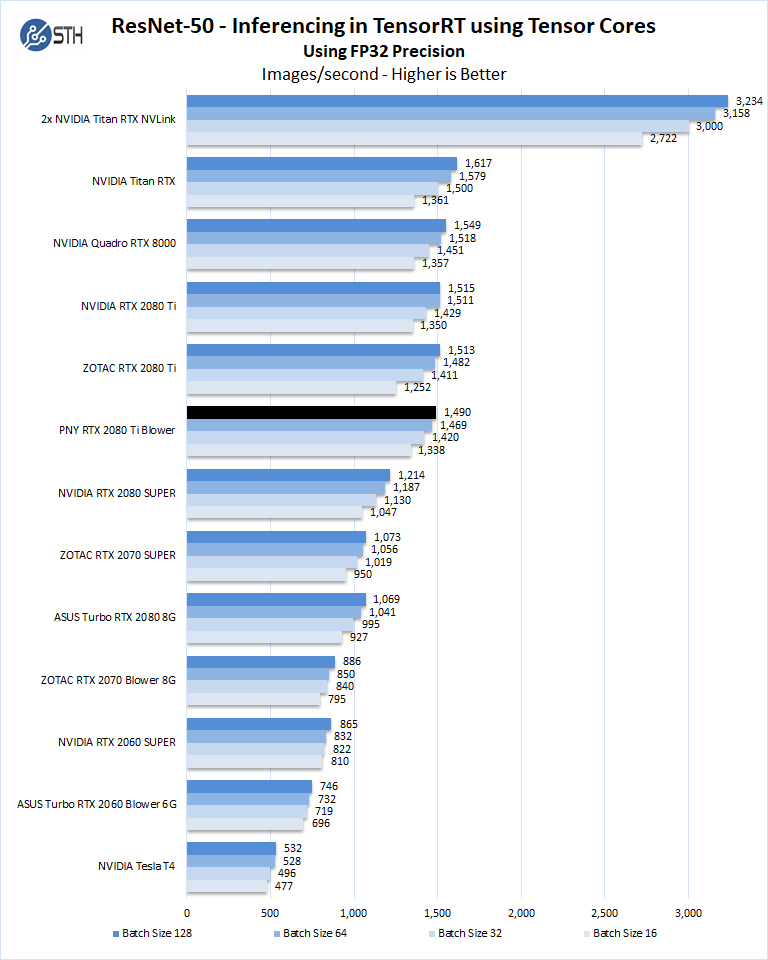

Let us look at the FP16 and FP32 results.

Overall, we are back in the 1-2% variance range between the Founders Edition, an inexpensive dual-fan card, and this blower-style card.
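One way to express a 1-2% spread is the relative difference of each card's throughput against a chosen baseline, such as the Founders Edition. The helper below is an illustrative sketch with hypothetical inputs, not our charting code.

```python
def percent_difference(result: float, baseline: float) -> float:
    """Relative difference of result versus baseline, in percent.

    Positive means result is faster than the baseline card, negative slower.
    """
    if baseline == 0:
        raise ValueError("baseline must be non-zero")
    return (result - baseline) * 100.0 / baseline


if __name__ == "__main__":
    # Hypothetical images/sec figures for illustration only.
    print(percent_difference(98.0, 100.0))
```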

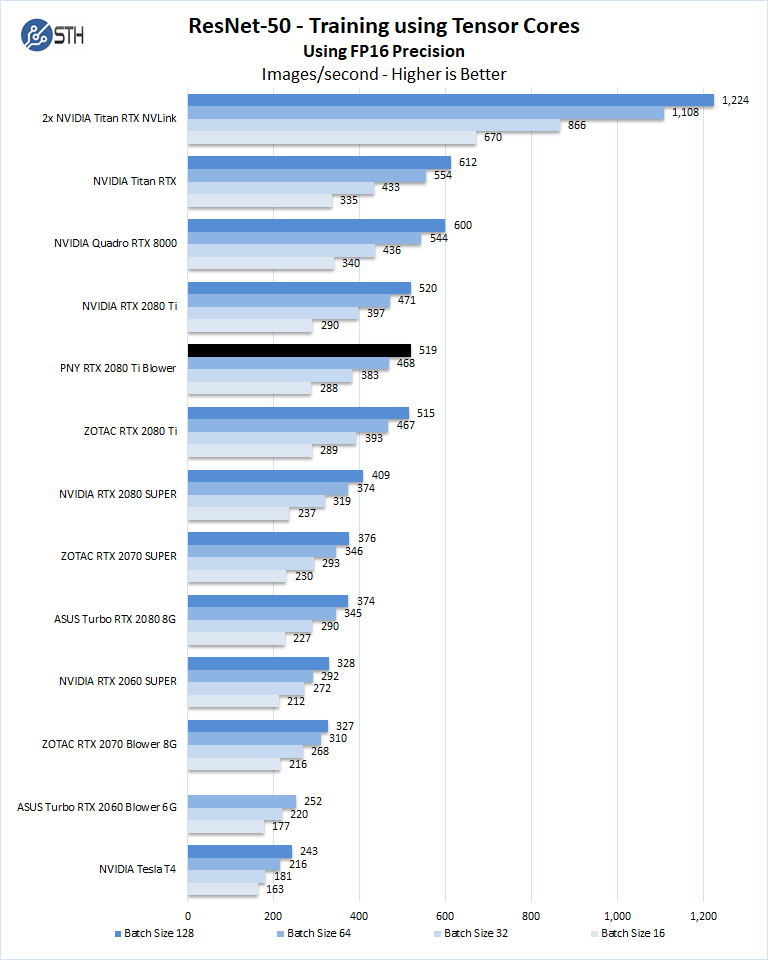

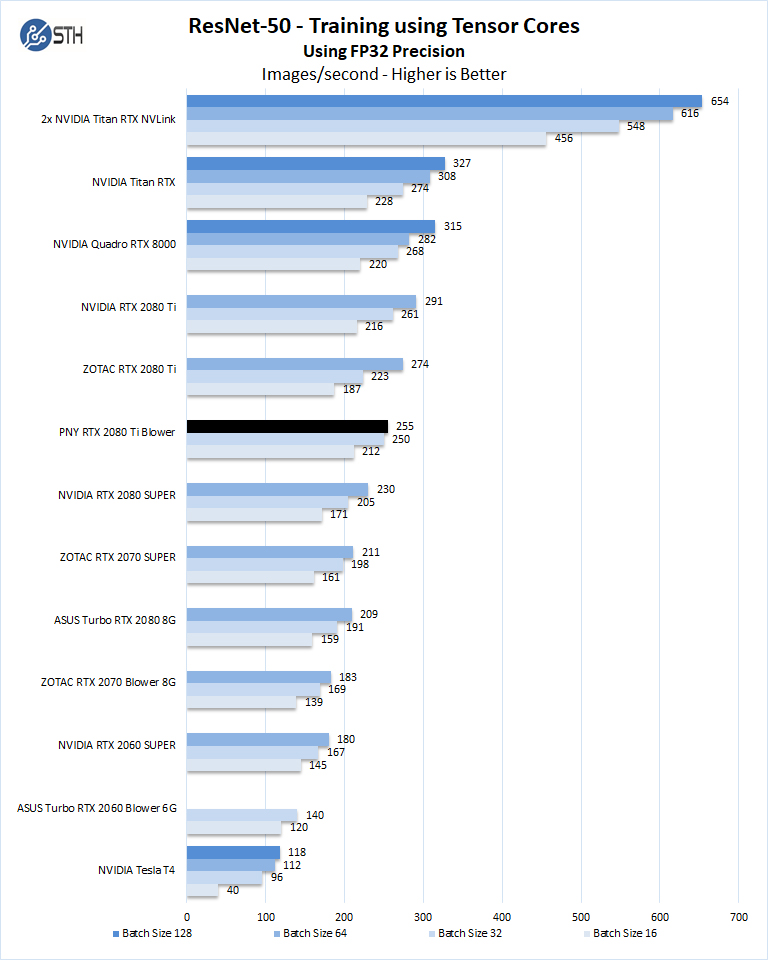

Training with ResNet-50 using TensorFlow

We also wanted to train the venerable ResNet-50 using TensorFlow. During training, the neural network learns features of images (e.g. objects, animals, etc.) and determines which features are important. Periodically (every 1000 iterations), the neural network tests itself against the test set to measure its loss and accuracy on images it has not trained on. Accuracy can be increased through repetition (running a higher number of epochs).

The command line we will use is:

```
nvidia-docker run --shm-size=1g --ipc=host --ulimit memlock=-1 --ulimit stack=67108864 \
  -v ~/Downloads/imagenet12tf:/imagenet --rm -w /workspace/nvidia-examples/cnn/ \
  nvcr.io/nvidia/tensorflow:18.11-py3 \
  python resnet.py --data_dir=/imagenet --layers=50 --batch_size=128 \
  --iter_unit=batch --num_iter=500 --display_every=20 --precision=fp16
```

Parameters for resnet.py:

--layers: The number of neural network layers to use, e.g. 50.

--batch_size or -b: The number of ImageNet sample images to use for training the network per iteration. Increasing the batch size will typically increase training performance.

--iter_unit or -u: Specify whether to run batches or epochs.

--num_iter or -i: The number of batches or epochs to run, e.g. 500.

--display_every: How frequently training performance will be displayed, e.g. every 20 batches.

--precision: Specify FP32 or FP16 precision; FP16 also enables Tensor Core math on Volta and Turing GPUs.
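Because --iter_unit=batch with --num_iter=500 counts batches rather than epochs, a short calculation shows how much of ImageNet one benchmark run actually covers. This sketch assumes the standard ILSVRC-2012 training set of 1,281,167 images and that a final partial batch counts as one step; the function names are hypothetical and not part of resnet.py.

```python
# Assumption: the standard ILSVRC-2012 (ImageNet-1k) training set size.
IMAGENET_TRAIN_IMAGES = 1_281_167


def images_processed(num_iter: int, batch_size: int) -> int:
    """Images seen when --iter_unit=batch runs num_iter batches."""
    return num_iter * batch_size


def epoch_fraction(num_iter: int, batch_size: int,
                   dataset_size: int = IMAGENET_TRAIN_IMAGES) -> float:
    """Fraction of one full pass over the training set."""
    return images_processed(num_iter, batch_size) / dataset_size


def batches_per_epoch(batch_size: int,
                      dataset_size: int = IMAGENET_TRAIN_IMAGES) -> int:
    """Batches in one epoch, rounding up so a partial last batch counts."""
    return -(-dataset_size // batch_size)


if __name__ == "__main__":
    print(images_processed(500, 128), round(epoch_fraction(500, 128), 4))
    print(batches_per_epoch(128))
```

In other words, a 500-batch run at batch size 128 touches only about 5% of one epoch, which is fine for measuring throughput but far from a full training run.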

While this TensorFlow script cannot select individual GPUs itself, they can be specified by exporting CUDA_VISIBLE_DEVICES as a comma-separated list of GPU indices (e.g. export CUDA_VISIBLE_DEVICES=0,1,2,3) within the Docker container workspace.
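For runs launched from Python rather than a shell, the same restriction can be applied by setting the environment variable before TensorFlow starts. A minimal sketch; the helper names are hypothetical, and the variable only takes effect if it is set before the CUDA runtime initializes in that process.

```python
import os


def visible_devices(gpu_ids):
    """Format GPU indices the way CUDA_VISIBLE_DEVICES expects, e.g. '0,1,2,3'."""
    return ",".join(str(i) for i in gpu_ids)


def restrict_gpus(gpu_ids):
    """Limit this process (and its children) to the listed GPUs.

    Must be called before TensorFlow or any other CUDA library initializes
    the GPUs; changing it afterwards does not affect the running process.
    """
    os.environ["CUDA_VISIBLE_DEVICES"] = visible_devices(gpu_ids)


if __name__ == "__main__":
    restrict_gpus([0, 1])
    print(os.environ["CUDA_VISIBLE_DEVICES"])
```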

We run batch sizes of 16, 32, 64, and 128 in both FP16 and FP32. Our graphs show combined totals.
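The training sweep (four batch sizes in two precisions, or eight runs) can be sketched as a helper that assembles resnet.py argument lists from the flags in the command above. The helper is hypothetical, and passing `fp32` in lowercase is an assumption based on the `--precision=fp16` spelling in that command.

```python
from itertools import product

# Flags copied from the resnet.py command above, minus the swept ones.
BASE = [
    "python", "resnet.py",
    "--data_dir=/imagenet",
    "--layers=50",
    "--iter_unit=batch",
    "--num_iter=500",
    "--display_every=20",
]


def training_sweep(batch_sizes=(16, 32, 64, 128), precisions=("fp16", "fp32")):
    """Return one argv list per batch size / precision combination."""
    return [
        BASE + [f"--batch_size={b}", f"--precision={p}"]
        for b, p in product(batch_sizes, precisions)
    ]


if __name__ == "__main__":
    for argv in training_sweep():
        print(" ".join(argv))
```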

As you can see, the 128 batch size is not possible using FP32 because the card does not have enough video memory. Still, for those who need a performance bump over the RTX 2080 SUPER, this is a solid option.

Next, we are going to look at the PNY RTX 2080 Ti Blower power and temperature tests and then give our final words.

I wouldn’t touch anything from PNY with a 10-foot pole. Everything I’ve bought from them has died prematurely.

We rack 8 of the Supermicro 10-GPU servers, adding about 3 racks a quarter. That isn’t much, but it’s 240 + 4 spare GPUs per quarter. We’ve been doing them since the 1080 Ti days. Our server reseller gets us good deals on other brands but says PNY doesn’t want the business as much, so we get other brands.

I’m gonna be honest here. That cooler looks like they’re just using something cheap. I’d like to see PNY actually design a nice cooler like the old NVIDIA FE cards had with vapor chamber and a metal housing. Maybe if they’d do that they could keep clock speeds higher or even push them and have a better solution for dense compute like you’re saying.

If they’re not doing that, then it’s too easy to buy Zotac for GPU servers and workstations.

The octane benchmark is probably using a scene that doesn’t fit in 11GB of VRAM, hence the large difference between high VRAM cards. It doesn’t say much about the card’s actual performance since it is heavily bottlenecked by out-of-core memory accessing.

@IndustrialAIAdmin What brands and models do you run in such an environment?