Kioxia 3.2TB Basic Performance

For this section, we are going to run through a number of workloads to see how the SSD performs. We also like to provide some easy desktop tool screenshots so you can quickly compare the results to other drives.

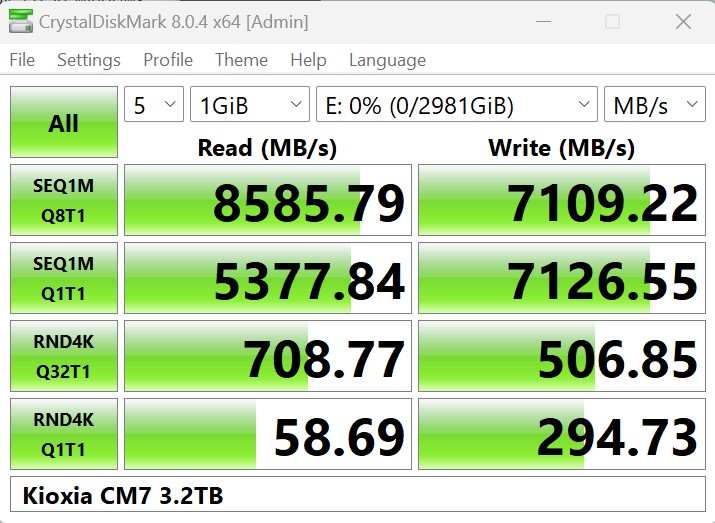

CrystalDiskMark 8.0.4 x64

CrystalDiskMark is used as a basic starting point for benchmarks as it is something commonly run by end-users as a sanity check. Here is the smaller 1GB test size:

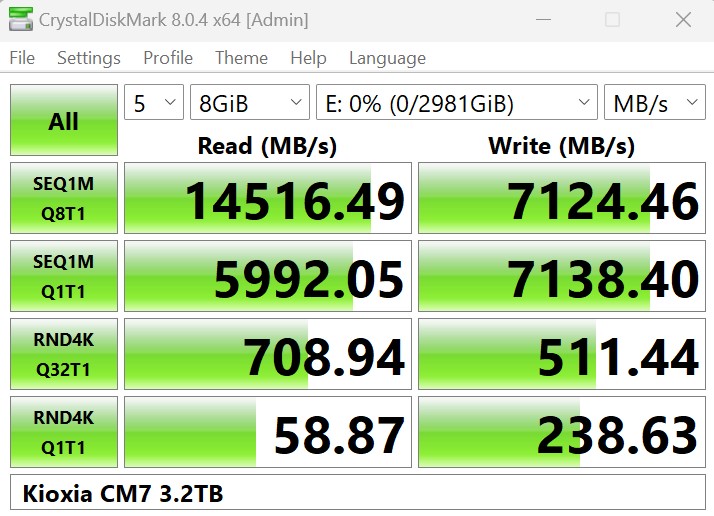

Here is the larger 8GB test size:

This is probably one of the starkest results for this drive. Over 14GB/s sequential read is crazy. The write numbers are a lot more mundane, but this is a fast SSD when it hits its stride.

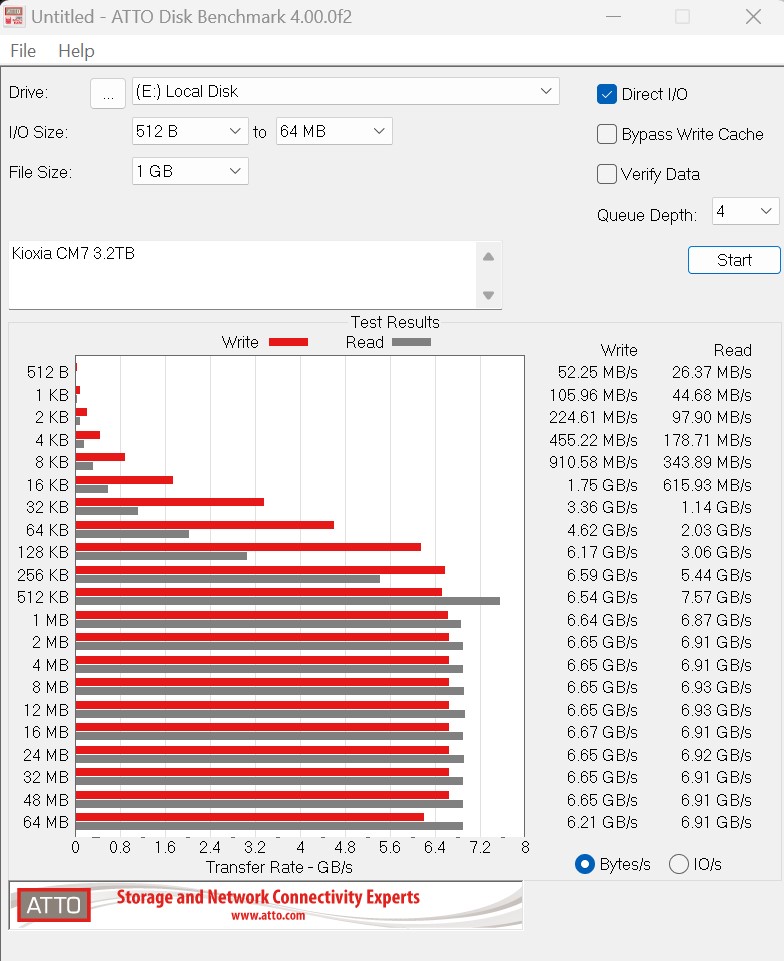

ATTO Disk Benchmark

The ATTO Disk Benchmark has been a staple of drive sequential performance testing for years. ATTO was tested at both 256MB and 8GB file sizes.

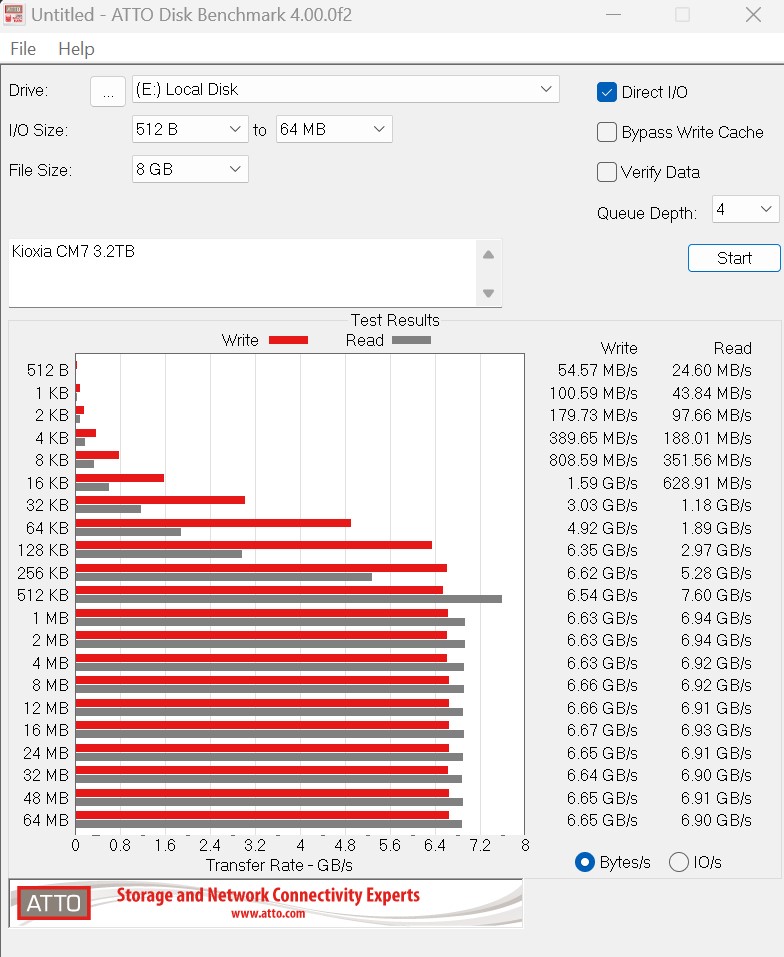

Here is the larger 8GB file size, which seems very small for a 3.2TB SSD.

ATTO seems to have enjoyed running at PCIe Gen4 speeds. We checked the drive, and even put it in a fancy PCIe Gen5 adapter specifically for drive testing. We put it in AMD systems and Intel systems, and still, this is what we saw with ATTO.
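For context on what "PCIe Gen4 speeds" means next to a 14GB/s CrystalDiskMark read, here is a minimal sketch of the theoretical link ceilings. It assumes a x4 link (an assumption on our part, though typical for this class of NVMe SSD) and uses the standard per-lane rates with 128b/130b encoding. The function name is illustrative, and packet and protocol overhead are ignored, so real-world figures will land lower.

```python
# Theoretical one-direction PCIe line rate, before packet/protocol overhead.
GT_PER_S = {"Gen3": 8.0, "Gen4": 16.0, "Gen5": 32.0}  # raw rate per lane
ENCODING = 128 / 130  # 128b/130b line encoding used by Gen3 through Gen5


def link_gb_per_s(gen: str, lanes: int = 4) -> float:
    """Return the usable line rate in decimal GB/s for a PCIe link.

    lanes=4 is an assumption for illustration, not a confirmed spec.
    """
    return GT_PER_S[gen] * ENCODING * lanes / 8  # divide by 8: bits -> bytes


for gen in ("Gen4", "Gen5"):
    print(f"{gen} x4: {link_gb_per_s(gen):.2f} GB/s")
```

Under these assumptions, a Gen4 x4 link tops out just under 8GB/s and a Gen5 x4 link just under 16GB/s, so a 14GB/s read is only possible with the link running at Gen5.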

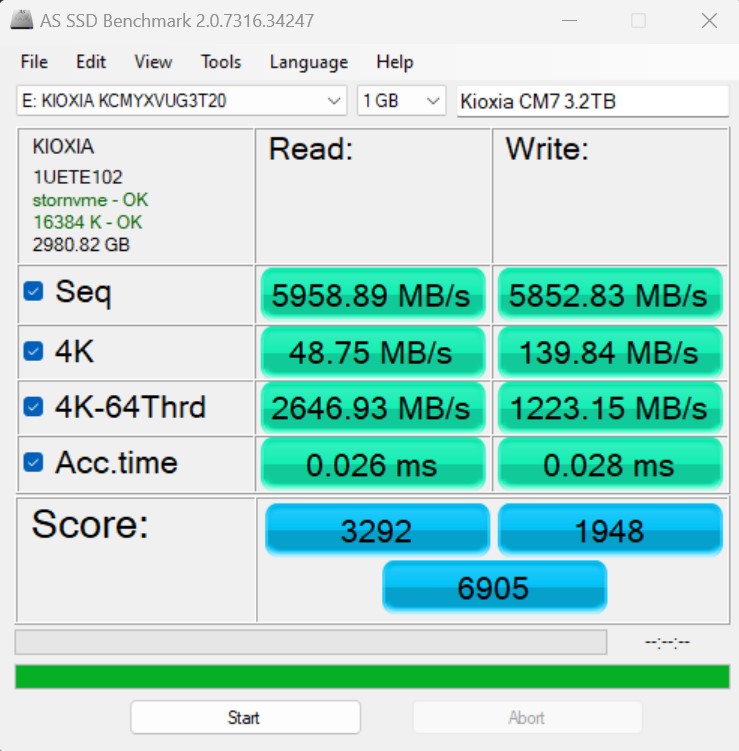

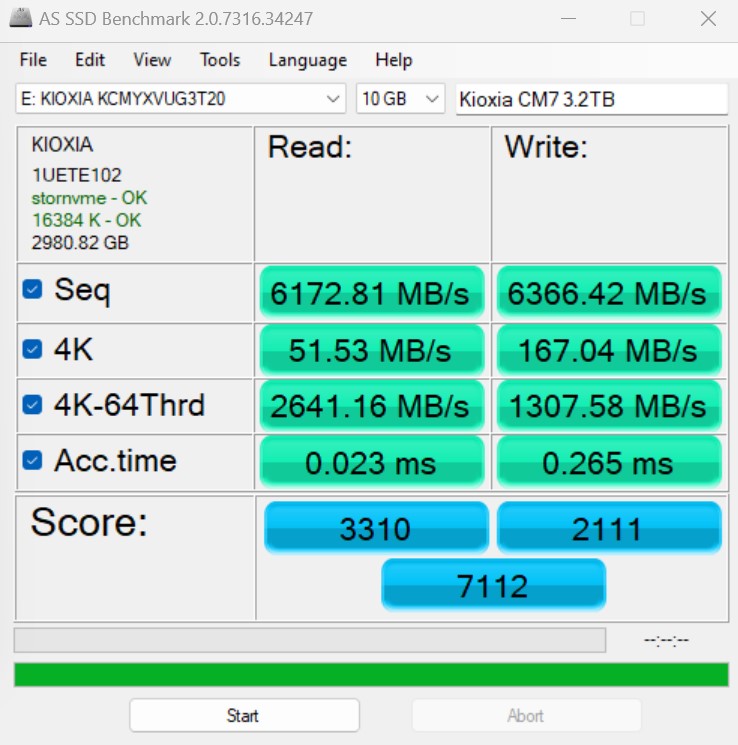

AS SSD Benchmark

AS SSD Benchmark is another good benchmark for testing SSDs. We run all three tests for our series. Like the other utilities, it was run with both the default 1GB and a larger 10GB test set.

Here is the 10GB result:

Again, this is decent performance, but nothing like that 14.5GB/s CrystalDiskMark result.

Next, let us get to some higher-level performance figures.

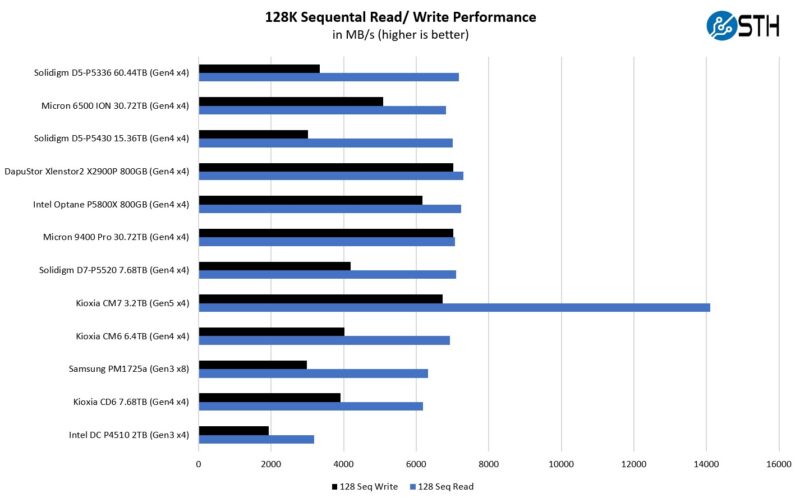

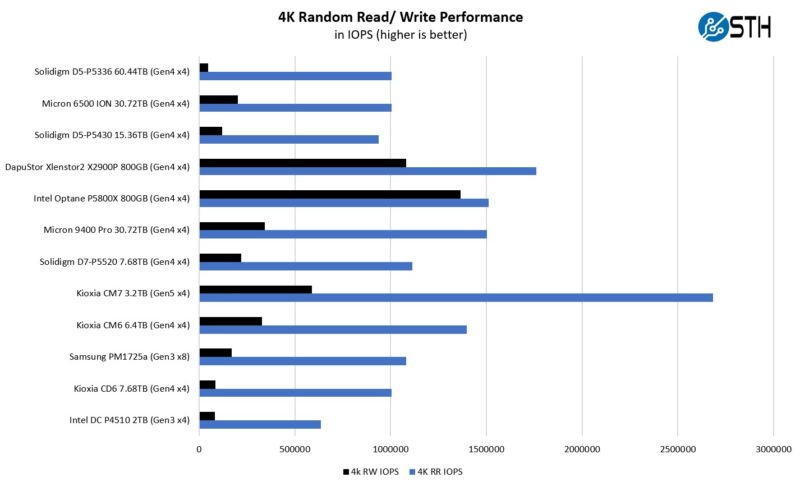

Kioxia CM7 3.2TB Performance

Our first test was to see sequential transfer rates and 4K random IOPS performance for the SSD. Please excuse the smaller-than-normal comparison set. In the next section, you will see why we have a reduced set. The main reason is that we swapped to a multi-architectural test lab. We actually tested these on 20 different processor architectures spanning PCIe Gen4 and Gen5. Still, we wanted to take a look at the performance of the drives.

Here is the 4K random IOPS chart:

Here, the Kioxia CM7 does well on the read IOPS and the sequential read performance. If you have a write-heavy application, then Kioxia and other vendors have write-optimized drives.
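To relate a 4K random IOPS figure to the sequential numbers in GB/s, a simple conversion can help. This is a minimal sketch that assumes "4K" means 4096-byte blocks. The function name and the example IOPS value are hypothetical and are not measured results from this review.

```python
def iops_to_gb_per_s(iops: float, block_bytes: int = 4096) -> float:
    """Convert an IOPS figure to throughput in decimal GB/s.

    block_bytes defaults to 4096, assuming '4K' means 4KiB blocks.
    """
    return iops * block_bytes / 1e9


# Hypothetical figure for illustration only, not a measured result.
example_iops = 1_000_000
print(f"{example_iops:,} IOPS at 4KiB = {iops_to_gb_per_s(example_iops):.2f} GB/s")
```

Even a very high 4K random IOPS figure moves far less data per second than a large-block sequential transfer, which is why the two are charted separately.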

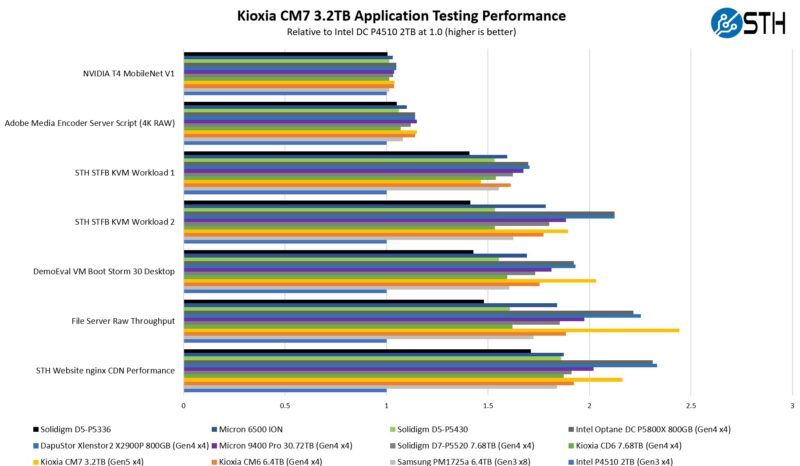

Kioxia CM7 3.2TB Application Performance Comparison

For our application performance testing, we are still using AMD EPYC. We have all of these working on x86 but we do not have all working on Arm and POWER9 yet, so this is still an x86 workload.

As you can see, there is a lot of variability here in terms of how much impact the drive has on application performance. Let us go through and discuss the performance drivers.

On the NVIDIA T4 MobileNet V1 script, we see very little performance impact on the AI workload, but we see some. The key here is that the performance of the NVIDIA T4 mostly limits us, and storage is not the bottleneck. We have an NVIDIA L4 that we are going to use with an updated model in the future, but we are keeping the T4 inference as a common point. Here we can see a benefit to the newer drives in terms of performance, but it is not huge. That is part of the overall story. Most reviews of storage products are focused mostly on lines, and it may be exciting to see sequential throughput double from PCIe Gen3 to PCIe Gen4, but in many real workloads, the stress on a system is not solely in the storage.

Likewise, our Adobe Media Encoder script times the copy to the drive, then the transcoding of the video file, followed by the transfer off of the drive. Here, we have a bigger impact because we have some larger sequential reads/writes involved, but the primary performance driver is still the encoding speed. The key takeaway from these tests is that if you are mostly compute-limited but still need to go to storage for some parts of a workflow, the SSD can make a difference in the end-to-end workflow.
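The copy, transcode, copy pattern can be sketched as a simple three-stage timing model to show why a much faster drive yields only a modest end-to-end gain when compute dominates. The stage times below are hypothetical numbers chosen for illustration, not measurements from our script, and the function name is our own.

```python
def end_to_end_speedup(copy_in_s: float, transcode_s: float,
                       copy_out_s: float, storage_speedup: float) -> float:
    """Overall speedup when only the storage-bound stages get faster.

    The transcode stage is treated as purely compute-bound, which is a
    simplifying assumption.
    """
    before = copy_in_s + transcode_s + copy_out_s
    after = (copy_in_s + copy_out_s) / storage_speedup + transcode_s
    return before / after


# Hypothetical stage times in seconds: 10s copy in, 100s transcode, 10s copy out.
print(f"{end_to_end_speedup(10, 100, 10, storage_speedup=2.0):.2f}x")
```

With these made-up numbers, doubling storage speed improves the whole workflow by only about 9%, because the transcode stage is untouched.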

On the KVM virtualization testing, we see heavier reliance upon storage. KVM virtualization Workload 1 is more CPU-limited than Workload 2 or the VM Boot Storm workload, so we see strong performance, albeit with less of a gain than in the other two. These are KVM virtualization-based workloads where our client is testing how many VMs it can have online at a given time while completing work under the target SLA. Each VM is a self-contained worker. We know, based on our performance profiling, that Workload 2, due to the databases being used, actually scales better with fast storage and Optane PMem. At the same time, if the dataset is larger, PMem does not have the capacity to scale, and it is being discontinued as a technology. This profiling is also why we use Workload 1 in our CPU reviews. Overall, the Kioxia CM7 did very well here.

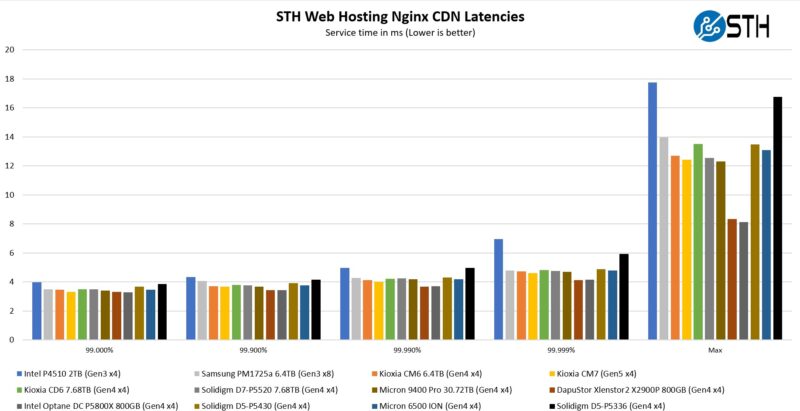

Moving to the file server and nginx CDN, we see better QoS and throughput from the Kioxia CM7, especially versus the previous-generation CM6. It is not the 2x improvement one might expect going from PCIe Gen4 to Gen5, but it is better. On the nginx CDN test, we are using an old snapshot and access patterns from the STH website, with caching disabled, to show what the performance looks like in that case. Here is a quick look at the distribution:

Here we can see the Kioxia drive performs well on perhaps the most real-world benchmark we have.

Now, for the big project: we tested these drives using every PCIe Gen4 architecture and all the new PCIe Gen5 architectures we could find, and not just x86, nor even just servers that are available in the US.

The article states “We have all of these working on x86 but we do not have all working on Arm and POWER9 yet.” Since Power9 is PCIe4 and this is a PCIe5 drive, maybe Power10 would be a better choice.

How does it match up with the Crucial T700? I see that is conspicuously absent from the comparisons.

Bifurcation on a x16 slot. 4×4 Gen4 RAID 10. Quad 1TB Gen4 NVMe drives get you 2TB of storage… and match these speeds. That has been here for a while…

T700 absent because consumer drives are not in the comparison – only DC/Ent drives.

And also, consumer drives are peaky in speed; they don’t keep their performance for long because most of the performance comes from the dynamic SLC cache.

DC and enterprise drives do not do SLC caching and are far more consistent.

Partly because they have a lot more channels than consumer SSDs do.