Intel has new network adapters, at least in some respects, with the Intel E830 200GbE and E610 10GbE NICs. These two new series are expected to roll out over 2025 and be Intel’s new PCIe Gen4 NICs moving forward. This is a launch where it really pays to read the details rather than the headline features.

Intel E830 200GbE and E610 10GbE NICs Launched

Intel says that its new Intel Ethernet E830 controllers have precision timing and security features while offering up to 200GbE of throughput, up from 100GbE on current models. You will notice that the image is of a dual SFP28 25GbE adapter, and there is a good reason for that as we will see a bit later.

The Intel Ethernet E610 series is a 10Gbase-T series that supports multi-gigabit speeds and up to 50% lower power. That 50% lower is the TDP difference between the Intel E610-XAT2 and the Intel X550-AT2 dual port 10Gbase-T adapters. There are also single and quad-port options like the E610-XT4 which might actually be a dual E610-XAT2 under the hood since the spec table says it supports bifurcation and a PCIe Gen4 x8 link split into x4 and x4.

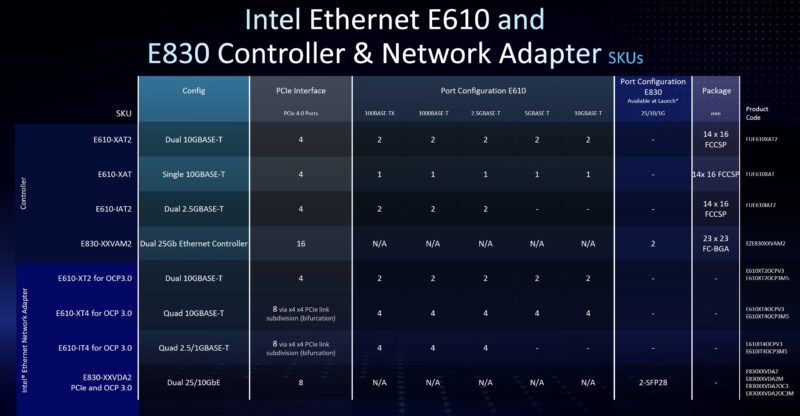

In terms of a network controller and adapter table, this is what Intel provided. There are single and dual 10Gbase-T controllers, as well as a dual 2.5GbE option. We have to give that 2.5GbE NIC a nod since we have The Ultimate Cheap Fanless 2.5GbE Switch Buyers Guide. In this table, “PCIe 4.0 Ports” seems to actually mean lanes, since ports are something different in PCIe. Still, this is big since it would be the first multi-port 2.5GbE controller from Intel. As an example, a single PCIe Gen4 lane should be enough to handle dual 2.5GbE.
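As a rough check on the lane math, here is a minimal sketch that estimates how many PCIe Gen4 lanes a given port configuration needs per direction. It assumes only the 16 GT/s per-lane rate and 128b/130b line encoding of PCIe Gen4, and it ignores packet header and flow-control overhead, so real-world headroom is somewhat smaller. The function names `lane_gbps` and `min_link_width` are hypothetical helpers for this illustration, not anything from Intel.

```python
import math

PCIE_GEN4_GT_PER_S = 16.0        # raw transfer rate per lane, per direction
ENCODING_EFFICIENCY = 128 / 130  # 128b/130b line encoding
LINK_WIDTHS = (1, 2, 4, 8, 16)   # common PCIe link widths


def lane_gbps() -> float:
    """Usable Gb/s per Gen4 lane after line encoding (protocol overhead ignored)."""
    return PCIE_GEN4_GT_PER_S * ENCODING_EFFICIENCY


def min_link_width(ports: int, port_gbps: float) -> int:
    """Smallest common link width that covers all ports at line rate in one direction."""
    lanes_needed = math.ceil(ports * port_gbps / lane_gbps())
    for width in LINK_WIDTHS:
        if width >= lanes_needed:
            return width
    raise ValueError("port configuration exceeds a x16 Gen4 link")


if __name__ == "__main__":
    for ports, speed in ((2, 2.5), (2, 10), (4, 10), (2, 25)):
        print(f"{ports} x {speed}GbE -> x{min_link_width(ports, speed)}")
```

Under these assumptions, a Gen4 lane carries about 15.75 Gb/s each way, so dual 2.5GbE (5 Gb/s) fits in a single lane, while quad 10GbE (40 Gb/s) needs three lanes of bandwidth and therefore rounds up to a x4 link.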

On the Intel E830, the first slide Intel showed said it was a 200GbE controller and much faster than the previous generation. The actual first part is going to be the Intel E830-XXVDA2, which is a dual 25GbE part only. Intel says other models will follow, but at launch the E830 should only offer about half the bandwidth of the prior-generation Intel E810, not twice the bandwidth. Intel says additional configurations for the E830 will arrive later in 2025, so hopefully those bring 200GbE speeds.

Final Words

New 10GbE, 25GbE, and 2.5GbE adapters in 2025 are a bit surprising. Even launching a 200GbE adapter in 2025 feels a bit behind. The reason for this is quite interesting. In many servers, instead of onboard networking, there are OCP NIC 3.0 slots. So instead of a server having dual 1GbE, dual 10Gbase-T, or other options onboard, these lower-performance NICs are moving to an OCP NIC 3.0 slot. That seems to be the main push of the Xeon E610 and E830 right now. It does not seem to be going after the high-end of the market.

It is certainly not an overstatement to say that after Intel lost its bid for Mellanox (we went into how Jensen and NVIDIA beat Bob Swan and Intel for the company in our Substack), Intel’s networking has never really recovered. NVIDIA has been shipping multiple 400GbE NICs while Intel is launching a 200GbE NIC (we covered Mellanox ConnectX-6 Dx at 200Gbps in 2019).

>That seems to be the main push of the Xeon E610 and E830 right now.

Xeon?

>NVIDIA has been shipping multiple 400GbE NICs

They are shipping 800Gb/s IB/2x400GbE with ConnectX-8. For example, Lenovo is offering it as option 4XC7B03667.

Presumably the E610 offers SR-IOV? Would be great built into a MB!

Hopefully they can get the prices right. Intel’s networking gear has always been strongest when it came to drivers, but with NVIDIA now running away with the network side of things there too, they’re going to have to start competing on price imo.

I am sure that I am missing something, but it seems like there is a waste of PCIe 4.0 lanes here. 4x 10Gb (let alone the 4x 2.5Gb) are using 4×2 (PCIe 8x), when that could be handled by a single PCIe 4.0 x 4 instead (5GB/s for 10Gb x 4 which is well within the 8GB/s theoretical maximum for such a connection). I would love for valuable PCIe lanes to be better utilized. What am I missing?

I don’t care about Intel & Mellanox story, this is awesome for homelabbers.

E610 is great for legacy networks that can reach up to 10G. It is top of the line, and should offer plenty of offload, the lowest power consumption, and an interface that doesn’t waste precious modern PCIe lanes.

Same for E830, be it in 200GbE or 25GbE version.

200GbE version has additional appeal – it can be bifurcated into 4x50GbE or 8x25GbE and offer great solution for star configuration in which all clients connect to the same fileserver. No need for expensive, usually loud and power hungry switch.

Only remaining questions:

* what exactly are they capable of and what are internal improvements

* prices, availability, sources.

a bit off topic, but regarding the ‘Ultimate Cheap Fanless 2.5GbE Switch Buyers Guide’

I’ll post here because it would just get lost in the comment thread of that in itself excellent article

Users looking for 5 port fanless 2.5GbE switches should forget everything in that thread and look at the Unifi Flex Mini 2.5G. It will blow any of those chinese switches out of the water a thousand to one, including price-wise.

Once you get the Controller Software installed either in VM or on your desktop, you will just laugh at the crap from china.

Personally I’m super excited someone is innovating in this space and not just chasing down the AI workload support (NVIDIA has that locked up more or less anyway). Most workloads outside AI don’t need the cost overhead of 400/800Gb/s NICs. “Just” speeds of <=200G with modern PCIe, enterprise features (SRIOV, etc), and lean on the power budget, is absolutely perfect and should sell crazy well in so many use cases.

Hell even in the 10/25Gb space, which is still very useful at small/medium scale, hard to find a modern NIC that has the aforementioned features. Aquantia NICs *don't* cut it feature wise but that's about all that's out there on new efficient silicon nowadays.

Josh,

Maybe legacy PCIe compatibility?

Let’s just hope they don’t suck, like E810.

E810 sucks, because:

1. Single Port Performance is bad. It doesn’t even do 100G line rate (for 64-byte packets) in single-port mode (to be fair: Broadcom also doesn’t. Mellanox / Nvidia does!)

2. Dual-Port Performance is atrocious, that’s why they don’t even publish benchmarks of this mode, unlike Mellanox / Nvidia (look for “Test#3 NVIDIA ConnectX-6 Dx 2x 100GbE PCIe Gen4 Throughput at Zero Packet Loss” in the official DPDK reports)

3. The E810-CQDA2 is the ONLY 100G NIC I’ve EVER encountered that does not support 40G on the QSFP. It supports 4x10G on the QSFPs, but not 40G. Wtf?
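For context on what "line rate for 64-byte packets" means in point 1, here is a small sketch of the standard Ethernet arithmetic. Each frame also occupies 20 extra bytes on the wire (7-byte preamble, 1-byte start-of-frame delimiter, and the 12-byte minimum inter-frame gap), so the packet rate a NIC must sustain is the link speed divided by 84 bytes per minimum-size frame. The helper name `line_rate_pps` is made up for this illustration.

```python
# Per-frame wire overhead: preamble (7) + start-of-frame delimiter (1)
# + minimum inter-frame gap (12), all in bytes.
ETH_WIRE_OVERHEAD_BYTES = 7 + 1 + 12


def line_rate_pps(link_gbps: float, frame_bytes: int = 64) -> float:
    """Packets per second needed to saturate a link with fixed-size frames."""
    bits_on_wire = (frame_bytes + ETH_WIRE_OVERHEAD_BYTES) * 8
    return link_gbps * 1e9 / bits_on_wire


if __name__ == "__main__":
    for speed in (10, 25, 100, 200):
        print(f"{speed}GbE @ 64B: {line_rate_pps(speed) / 1e6:.2f} Mpps")
```

At 100GbE this works out to roughly 148.8 million packets per second per port, which is the bar a card has to clear to claim 64-byte line rate.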

@Robin

What is more, the E810 is useless for Open vSwitch because their implementation of eSwitch switchdev is broken. Fortunately, if you’re interested in that, it’s spelled out in their documentation so you can choose any other vendor. They should just stop advertising this feature in specs if they are unable to fix it with software/firmware.

While E810 supports “enterprise features” the level of support is subpar. For example it’s unable to offload LACP when using SR-IOV, while Mellanox can with VF-LAG on the main interface, so that every VF can benefit from redundancy without having to bother with LACP itself (and the problems of it in VFs). That’s supported with just the standard mlx Linux driver, the more advanced vendor driver is not required.

The Intel ice driver’s documentation is full of caveats about broken features or features that do not work when other features are enabled. Yet for some reason Intel still benefits from a reputation for good networking, which might have been true 15 years ago, but definitely isn’t true now.

Honestly if Intel came out with a quality consumer grade multi-GbE (10/5/2.5/1/100) lower power controller that could be embedded on every motherboard, they would be back on top of the game. As it is now, anybody looking for faster than 2.5GbE for home setups is either using enterprise gear or Marvell/Aquantia. Intel has really ceded the consumer market as of late, and it’s kind of a bummer.

“The Intel Ethernet E610 series is a 10Gbase-T series that supports multi-gigabit speeds and up to 50% lower power. That 50% lower is the TDP difference between the Intel E610-XAT2 and the Intel X550-AT2 dual port 10Gbase-T adapters.”

Did Intel forget that the X710-T2L/T4L adapters exist in the space already? Yes, the X710 is PCIe 3.0 x8 and not PCIe 4.0, but why not compare to the X710 instead of the X550-AT2?

Actually, Intel did compare to the X710 controller in the E610 product brief, looks like Rohit got the information wrong. Here are the actual specs per Intel.

“With an absolute max power consumption of 4.47W, the E610-XAT2 controller represents a 53 percent reduction in max power compared to the Intel Ethernet Controller X710-AT2 and an incredible 61 percent reduction compared to the Intel Ethernet Controller X550-AT2.”
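To put those percentages in watts, here is a quick back-of-the-envelope sketch. It simply inverts the quoted reductions to estimate the max power of the older controllers. These are derived estimates from rounded percentages in the quote above, not figures stated by Intel, and `implied_baseline_watts` is a hypothetical helper name.

```python
def implied_baseline_watts(new_watts: float, reduction_pct: float) -> float:
    """Baseline power implied by a quoted percentage reduction down to new_watts."""
    return new_watts / (1 - reduction_pct / 100)


if __name__ == "__main__":
    e610_max_w = 4.47  # E610-XAT2 max power from the quoted product brief
    for name, pct in (("X710-AT2", 53), ("X550-AT2", 61)):
        watts = implied_baseline_watts(e610_max_w, pct)
        print(f"{name}: ~{watts:.1f} W implied max power")
```

If the quoted numbers hold, that implies roughly 9.5W for the X710-AT2 and roughly 11.5W for the X550-AT2, so the "up to 50% lower power" headline fits the X710 comparison more closely than the X550 one.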

I’ll stick to my mellanox cards. Better drivers, firmware, feature support, network standard, and also Intel cards always love to run at 100°C, even with cooling