To date, we have looked at three NVIDIA GeForce RTX 3090 graphics cards: the NVIDIA RTX 3090 FE, ASUS ROG Strix RTX 3090 OC, and ZOTAC RTX 3090 Trinity. All three are extremely capable graphics cards on their own. At the time of this review, we had two RTX 3090 GPUs here in the lab and wondered what type of performance numbers we might generate using an SLI/NVLink multi-GPU configuration.

Multi-GPU on NVIDIA GeForce RTX 3000 series graphics cards is somewhat of a problem. NVIDIA has basically dropped SLI support with this generation. Not only has NVIDIA dropped support, but most of the cards themselves do not come equipped with the ability to use an SLI or NVLink bridge. NVIDIA has been slowly phasing out the consumer edge connector for this. However, one card in the lineup includes an NVLink edge connector: the RTX 3090.

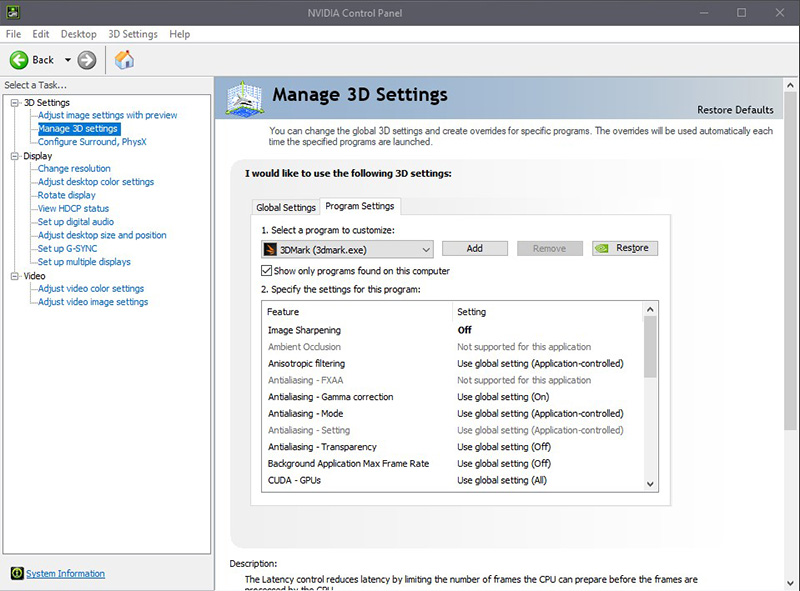

The latest NVIDIA drivers for the 3000 series of graphics cards will not show SLI options even if one has the NVLink bridge installed. When one opens the NVIDIA Control Panel and drills down into Manage 3D settings/Program Settings, the traditional SLI settings are not there, even with an NVLink bridge in place.

This phasing-out of SLI in favor of NVLink on higher-end GPUs makes a lot of sense. There is such a large delta between low-end GPUs and high-end data center GPUs that one can often scale within a single-GPU system. Still, with NVIDIA seemingly phasing out SLI, we are no longer in the days when one could buy two smaller cards for less than a larger card and get a value SLI setup. On the compute side, we found that programs that can use multiple GPUs deliver stunning performance results that might very well make the added expense of two NVIDIA 3000 series GPUs worth it.

We started our multi-GPU adventure with two RTX 3090 graphics cards, the ASUS ROG Strix RTX 3090 OC and ZOTAC RTX 3090 Trinity. We did have a few problems to overcome. First, the ASUS ROG Strix RTX 3090 OC is simply huge, much like a large brick. A keen observer would also spot the difference between the two cards' heights. The ASUS ROG Strix RTX 3090 OC is 5.51″ tall, while the ZOTAC RTX 3090 Trinity is 4.75″ tall. We found our NVLink bridge would not line up because the ZOTAC card's connector sat much lower. Being creative, and only planning on running this configuration for these benchmarks, we ordered a PCIe x16 extender riser cable to lift the ZOTAC card. We now had a fully bridged dual-GPU system.

Clearly, this was not an optimal configuration to run long term, but it did serve for running our benchmarks.

We are also going to quickly note that even as GPU prices have drifted upward, GPU-to-GPU connectors have gone from free items in motherboard boxes to $75+ parts.

One last item to note: being able to purchase even a single RTX 3090 is next to impossible unless you turn to the second-hand market. Getting two of the same RTX 3090 graphics card is even more difficult. Normally we would want to run two identical NVIDIA RTX 3090s; however, we have to recognize that is not the environment we are in. The prospect of having two different cards is simply a sign of the times. If this were a week or two of tight availability, we would understand. Instead, this has been a persistent challenge since the cards launched, spanning many quarters.

We figured it might be worth the experiment to run our two RTX 3090 graphics cards in an NVLink configuration and see what we might get in our benchmarks. We can then gauge whether going down the multi-GPU (desktop compute) route is even worth it in the first place. For many use cases, the power and cooling requirements will make this impractical on the desktop. Having more than two GPUs in a desktop system these days is possible, but practical constraints will push multi-GPU workloads to data centers and data cabinets.

The market has changed since we did our dual NVIDIA Titan RTX NVLink and dual NVIDIA Quadro RTX 8000 NVLink reviews. Part of that is due to NVIDIA’s product lineup while some change is simply due to market conditions.

Now, it is on to testing.

Darn son that’s the bossliest beast I ever did see!!!

DirectX 12 supports multi GPU but has to be enabled by the developers

NVlink was only available on the 2080 Turing cards – so only the high end SKU having it – nothing new. AMD’s solution is what again? Nothing.

in DX11 games – dual 2080Ti were a viable 4K 120fps setup – which I ran until I replaced them with a single 3090. 4K 144Hz all day in DX11.

I would imagine someone will put out a hack that fools the system into enabling 2 cards – even if not expressly enabled by the devs

2 different cards is about as ghetto as it gets and shows the (sub)standards of this site – Patrick’s AMD fanboyism is the hindrance to this site – used to check every day – but now check once a week – and still little new… even the jankiest of yootoob talking heads gets hardware to review.

As an aside, I hope ya’ll get a 3060 or 3080 TI to review.

The possibility of the crypto throttler affecting other compute workloads has me very worried… and STH’s testing is very compute focused.

Good review Will, ignore the fanboy whimpers. Any regular knows how false his claims are.

Next up A6000?

Curious how close the 3090 is.

Nice review. I wonder how well the temperature can be controlled with a GPU water cooler.

Thanks for the review. It would be awesome to see how much the NVLink matters. I’m particularly interested for ML training: does the extra bandwidth help significantly vs. going through PCIe?

One huge issue is the pricing.

Many see the potential ML / DL Applications of the 3080 and their first idea is to stick them in Servers for professional use. The issue with that is that, in theory, this is a datacenter use of the GPU and thus violates the Nvidia Terms of Use…

AFAIK only Supermicro sells Servers equipped with the RTX 3080… why they are allowed to do that ? IDK… considering it is supermicro, they might just not care.

Here comes the pricing issue though. If you are offering your customers the bigger brands such as HPE and Dell EMC, you are stuck with equipping your servers with high-end datacenter GPUs such as the V100S or A100, which cost 6-8 times as much as an RTX 3080 with similar ML performance … on paper.

Nvidia seems to be shooting themselves in the foot with this, in addition to making my job annoying: I have to convince customers that putting an RTX 3080 into their towers should be considered a bad idea.

I’ve got exactly the same 2 cards!

What specific riser did you use? I’d like to hear your recommendation before I purchase something random ;).

I have two 3090, same brand and connected with the original NVLink.

We acquired these for a heavy weight VR application done with Unreal Engine 4.26

We tested all the possible combinations but we couldn’t make them work together in VR. Only one GPU is taking the app. We checked with the Epic guys and they don’t have a clue. We contacted Nvidia technical support, and the call center guys literally don’t have any page that covers this extreme configuration. We want to use one eye per GPU but it is not working. Does anyone have an idea or know something? Any help is more than welcome!

Dual gpu LOL Can’t believe people keep doing this hahaha

One of the problems I have run into with multiple cards is that they do not seem to increase the overall GPU memory available. I have configurations with 2-4 cards in the computers, and when I run applications, they seem to think that I have only 12 GB of GPU memory, even when 2 are NVLinked. I see the processes spread out amongst the cards, but for large data files, I see my GPU footprint increase to around 11.5 – 11.7 GB, and things slow down when this happens. Thus, GPU memory seems to be the bottleneck that I have been running into (12 GB on the 3080 Ti and the 2080 Ti).

While getting cards has been a little difficult, it isn’t that hard to source a pair of the same cards. I currently have 3 x rtx3090 ftw3 ultra cards and 1 3090 from an Alienware.

I learned long ago, while running a pair of GTX 1080 Tis, that very few devs did the work necessary to benefit from SLI. One card just sat silently while the other worked. Perhaps they’ve improved. Only time will tell.

I have Asus Strix 3090s (x2) with an NVLink bridge (4-slot) and can’t get the Nvidia Control Panel to see that they are connected; there is no option to enable SLI/NVLink. Using the latest driver, 512.59.

Was your bench using PCIe 4 hardware? Because this could bottleneck your performance.

You should be seeing an improvement close to 1.5+ times performance, which would put it above the 4090. What PSU did you use?