Now that the new 4th Gen Intel Xeon Scalable processors have launched, we have new servers with new capabilities and designs to review. Here we have one of those servers, the ASUS RS700-E11-RS12U, which is a mainstream 1U server platform. We are going to see what makes this server different, and why the performance/power of this server was slightly surprising given the other servers that we have reviewed.

ASUS RS700-E11-RS12U Hardware Overview

We are going to split the hardware overview into roughly two sections. We are first going to look outside the chassis in our external overview. We will then go into the system internals. We also have a video for this review that you can find here:

As always, we suggest watching this in its own browser, tab, or window for the best viewing experience. With that, let us get to the hardware.

ASUS RS700-E11-RS12U External Hardware Overview

The RS700-E11-RS12U is an 842.5mm or 33.2-inch deep server. That is a fairly reasonable depth for a 1U server these days. Servers are increasing memory channels and PCIe lanes, yet still fit into existing rack depth. The big difference is just how densely components are packed inside modern servers.

The right side has miniature status LEDs and features like power buttons.

The left side rack ear has the ASUS branding.

On the front there are 12x 2.5″ drive bays. These 12x drive bays are designed to take NVMe SSDs, but they can also handle SATA via the Intel C741 PCH.

Because there are 12x drives, not 10x, and because of how hot modern processors run, a trade-off has to be made on the drive bays. The trays only screw in from the bottom of the drives and are not tool-less. Most 10x 2.5″ 1U chassis we test these days have tool-less trays, but that is a bigger challenge when airflow is a constraint.

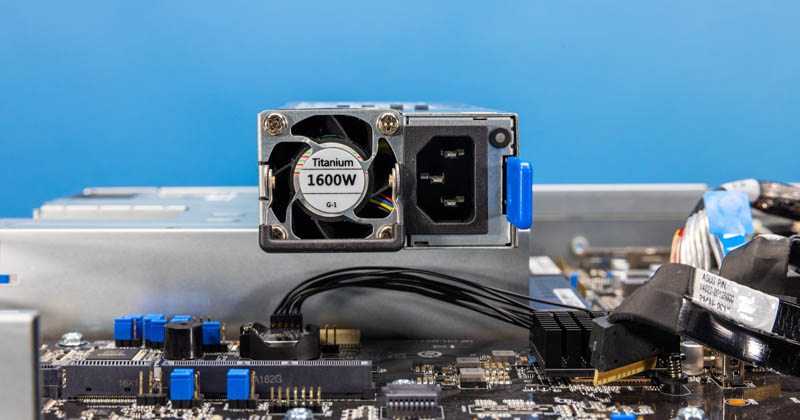

Moving to the rear, we have redundant power supplies.

ASUS has 1.6kW power supplies to handle the load a high-end dual Intel Xeon setup puts on the system plus all of the expansion card options, NVMe SSDs, and RAM. One nice feature is that these are 80 PLUS Titanium power supplies, as ASUS focuses on efficiency in this type of system.

The center I/O block is distinctively ASUS. There is a power button on the rear as well as a POST code LCD so one can see if a server is operating properly, or perhaps is hanging at boot. There are also standard USB and VGA ports, plus a management port.

ASUS also provides two RJ45 ports for base networking. In our server, these are 10GBASE-T ports via an Intel X710 network controller. It is nice to have 10GbE onboard, although for higher-end servers the high-speed networking is likely to be handled via add-in cards.

Aside from the center low profile slot, there is another riser with two full-height PCIe Gen5 slots.

Next, let us get inside the system to see how this is configured.

It’s amazing how fast the power consumption is going up in servers… My ancient 8-core per processor 1U HP DL 360 Gen9 in my homelab has 2x 500 Watt platinum power supplies (well, it also has 8x 2.5″ SATA drive slots versus 12 slots in this new server, no on-board M.2 slots, and fewer RAM slots).

So IF someone gave me one of these new beasts for my basement server lab, would my wife notice that I hijacked the electric dryer circuit? Hmmm.

@carl, you don’t need 1600W PSUs to power these servers. Honestly, I don’t see a use case where this server uses more than 600W, even with one GPU. I guess ASUS just put in the best-rated 1U PSU they could find.

Data Center (DC) “(server room) Urban renewal” vs “(rack) Missing teeth”: From when I first landed in a sea of ~4000 Xeon servers in 2011 until I powered off my IT career in said facility in 2021, pre-existing racks went from 32 servers per rack to far fewer per rack (“missing teeth”).

Yes, the cores per socket went from Xeon 4, 6, 8, 10, and 12 to EPYC Rome 32s. And yes, with each server upgrade I was getting more work done per server, but fewer servers per rack in the circa 2009 original server rooms in this corporate DC after maxing out power/cooling.

Yes, we upped our power/cooling game in the 12-core Xeon era with immersion cooling as we built out a new server room. My first group of vats had 104 servers (2U/4-node chassis) per 52U vat… The next group of vats, with the 32-core Romes, we could not fill (yes, still more cores per vat though)… So again, losing ground on a real estate basis.

….

So do we just agree that as this hockey stick curve of server power usage grows quickly, we live with a growing “missing teeth” issue over upgrade cycles, and perhaps start to look at 5 – 8 year “urban renewal” cycles (a rebuild of the given server room’s power/cooling infrastructure at great expense) instead of the perhaps 10 – 15 year cycles of the 2010-ish era?

For anyone running their own data center, this will greatly affect their TCO spreadsheets.

@altmind… I am not sure why you can’t imagine it using more than 600W when it used much, much more (+200W at 70% load, +370W at peak) in the review, all without a GPU.

@Carl, I think there is room for innovation in the DC space. I don’t see the power/heat density changing, and it is not exactly easy to “just run more power” to an existing DC, let alone cooling.

Which leads to the current nominal way to get out of the “Urban renewal” vs “Missing teeth” dilemma as demand for compute rises and new compute devices’ power/cooling needs per unit rise: “Burn down more rain forest” (build more data centers as our cattle herd grows).

But I’m not sure every place wants to be Northern Virginia, or wants to devote a growing % of its energy grid to facilities that use a lot of power (thus requiring more hard-to-site power generation facilities).

As for “I think there is room for innovation in the DC space”, this seems to be a basic physics problem that I don’t see any solution for on the horizon. Hmmm.