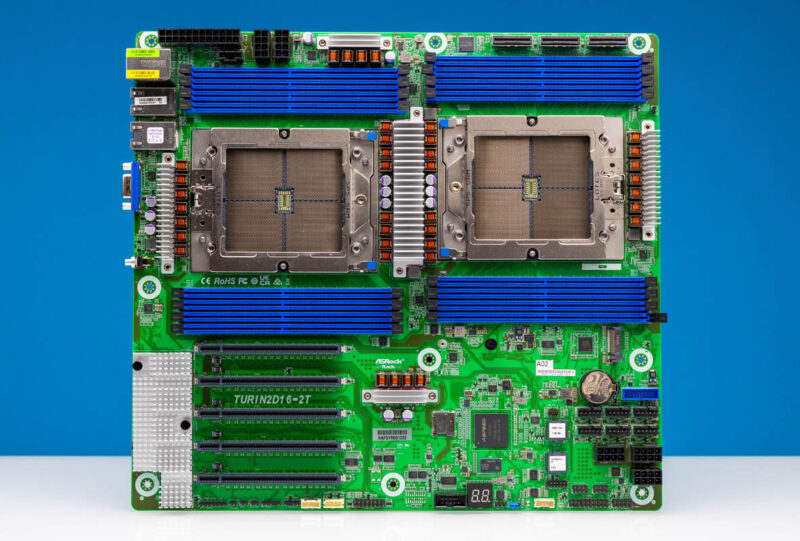

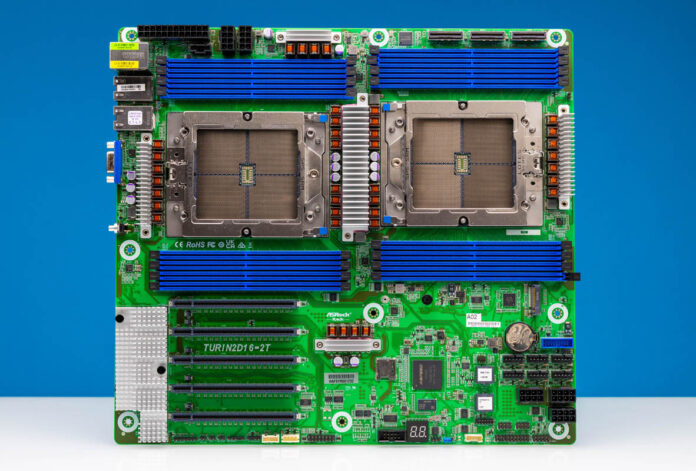

The ASRock Rack TURIN2D16-2T is a classic motherboard design, updated to handle today’s high-end server processors. With dual AMD EPYC 9005 and EPYC 9004 CPUs, sixteen DDR5 slots, and plenty of PCIe connectivity, this motherboard offers a lot in a familiar form factor. As an added bonus, we get 10Gbase-T as our base onboard networking as well. Perhaps the bigger story of this motherboard is just how far the ASRock Rack engineers had to push this platform. Let us get into it.

ASRock Rack TURIN2D16-2T Overview

If the motherboard looks large, that is because it is. This is an EEB 12″ x 13.2″ size motherboard that will fit in many server chassis, but it is still worth double-checking chassis compatibility before you try building a system around the board.

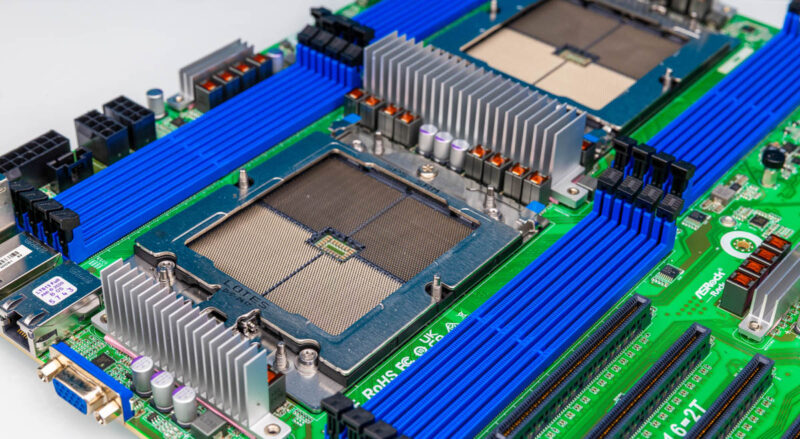

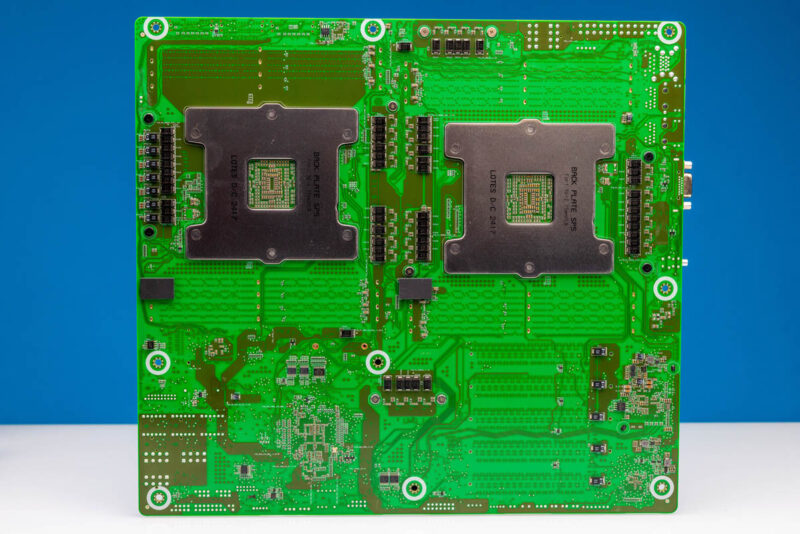

The big feature, by far, is the dual AMD SP5 sockets for AMD EPYC 9004 and EPYC 9005 series processors. If you want to build a server, and have lots of cores, then this is a great platform to do that in. Many of our readers will quickly spot both a challenge with fitting two SP5 sockets on a motherboard, and how ASRock Rack solved it.

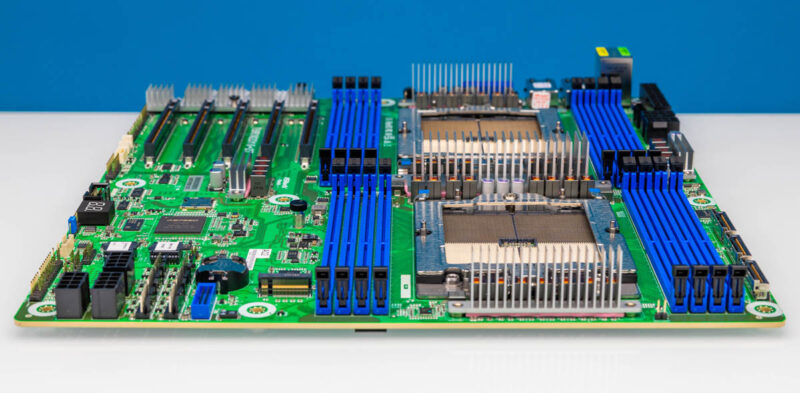

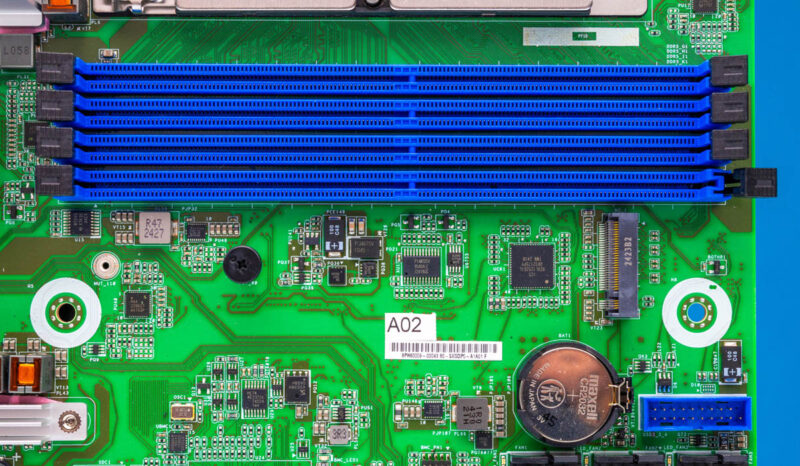

The AMD SP5 platform is huge. Two sockets can only fit side-by-side in a full width 19″ rack motherboard. Even then, that assumes only 12 of the possible 24 DDR5 DIMM slots are present. In a 12″ x 13.2″ motherboard, even two sockets with twelve DIMMs is not possible side-by-side. Instead, ASRock Rack has these SP5 sockets in serial configuration which makes a very good case for liquid cooling them with solutions like the Dynatron L32 1U Liquid Cooler. We had to use two of those on this platform. Even with that, only eight DDR5 DIMM slots are present for each CPU. EATX, EEB, and other traditional form factors were designed with much smaller CPUs in mind, and today’s modern CPUs are a challenge to fit. This takes effort by ASRock Rack’s engineers just to make this happen.

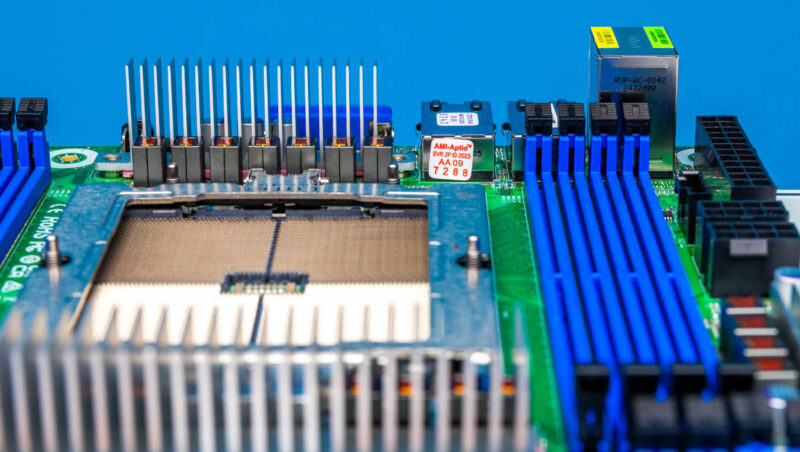

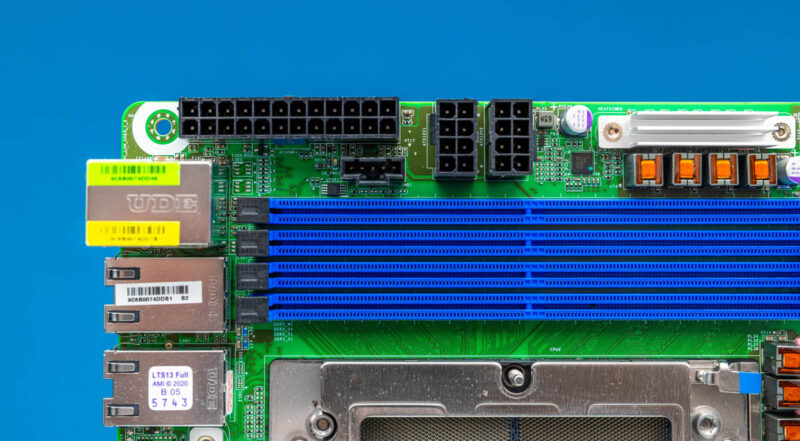

Some folks may have seen a red and white sticker in the above photo and wondered what that is. It is the AMI Aptio license sticker.

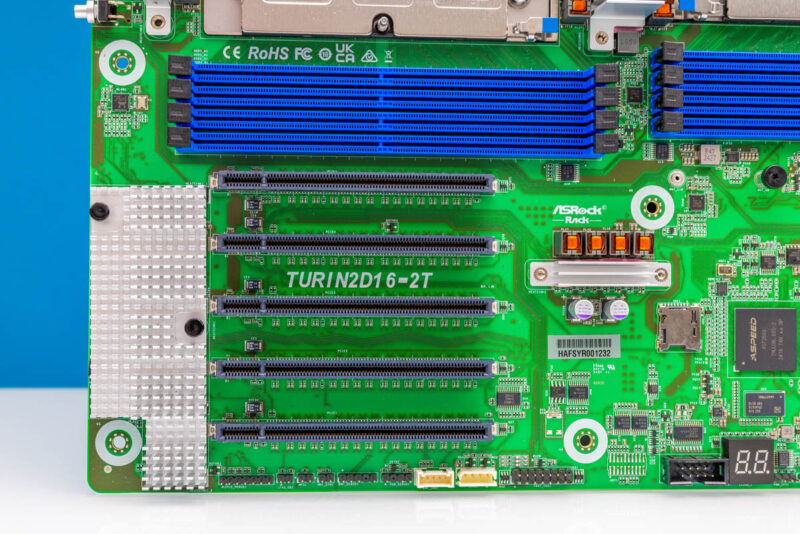

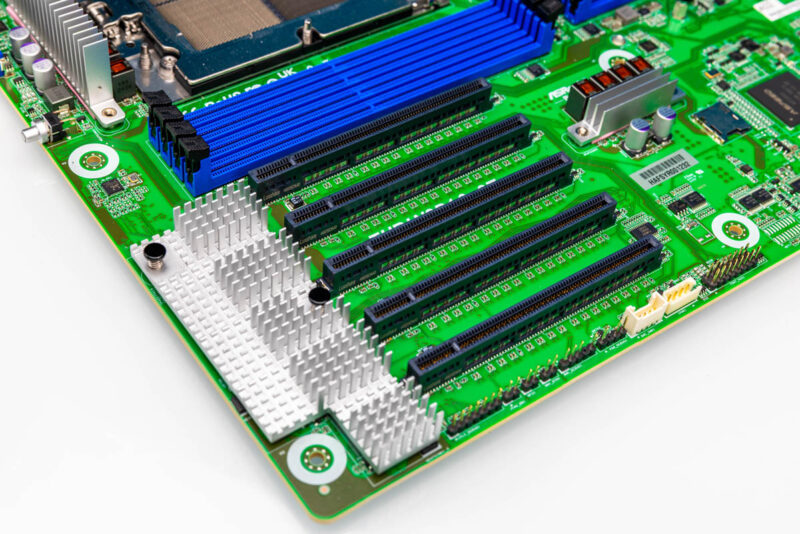

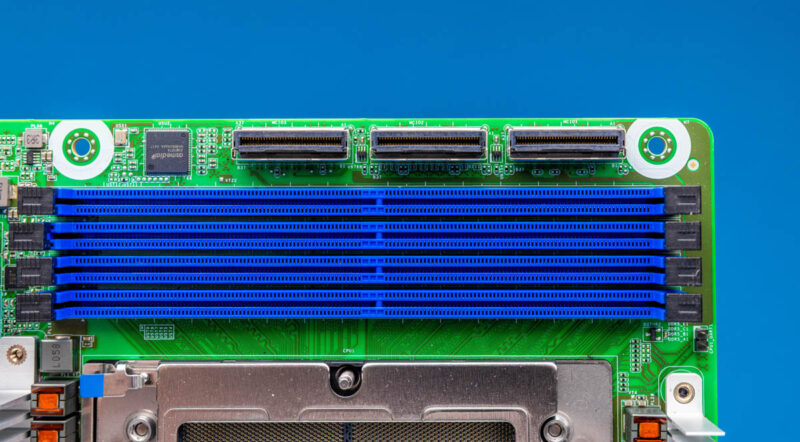

The next feature should have folks very excited. Even with massive CPU sockets and DDR5 DIMM slots, ASRock Rack still fit five PCIe x16 slots on this platform.

Slots 1-3 are PCIe Gen4 x16, while Slots 4 and 5 are PCIe Gen5 x16 and also support CXL 2.0. Not only are the CPU sockets and DIMM slots a challenge on modern motherboards, but so is simply routing PCIe Gen5 x16 signaling.

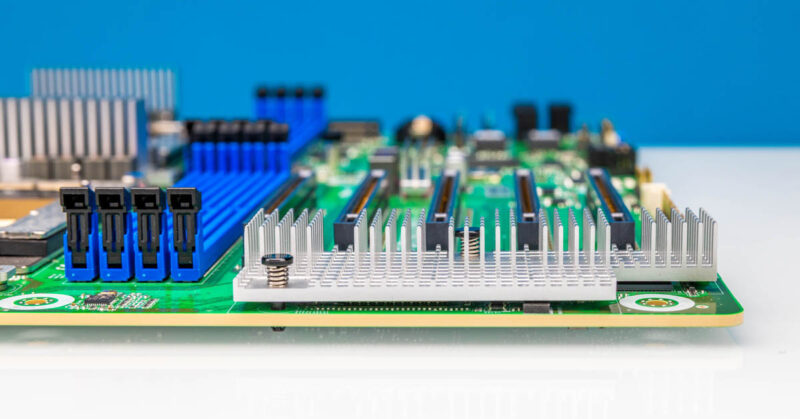

Under the heatsink we get our Intel X710 NIC powering our 10Gbase-T ports.

One thing we wish ASRock Rack had done is add clear printed labels on the motherboard showing which slots are PCIe Gen4 and which are Gen5 / CXL. Big labels that are easy to see help a year or two down the road if a card needs to be added to a system. Sometimes it is easier to have the relevant information printed next to the slot than it is for a technician to reference a manual.

On the bottom front we have our USB 3.0 front panel header, fan headers, power connections and some other I/O.

Just below the DIMMs for CPU1, there is an onboard M.2 slot. This is PCIe Gen4 x4 and supports M.2 2280 80mm drives as shown, or the standoff can be moved to the M.2 22110 110mm SSD position.

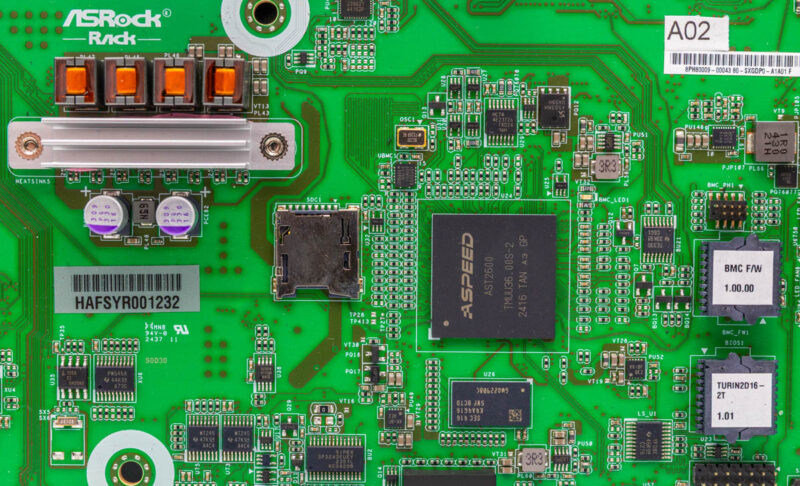

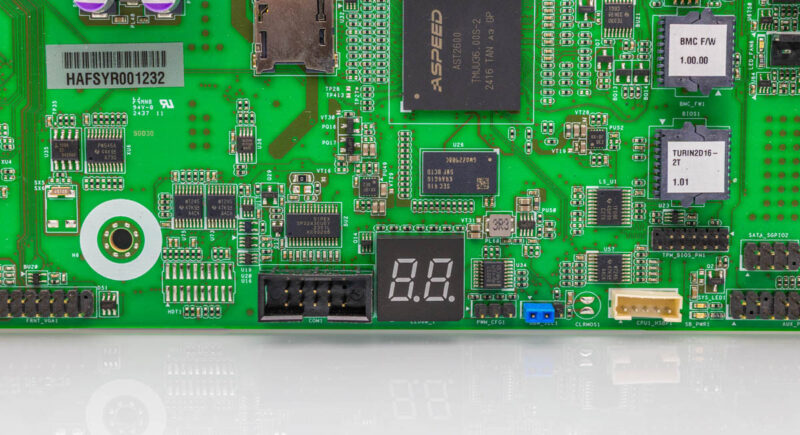

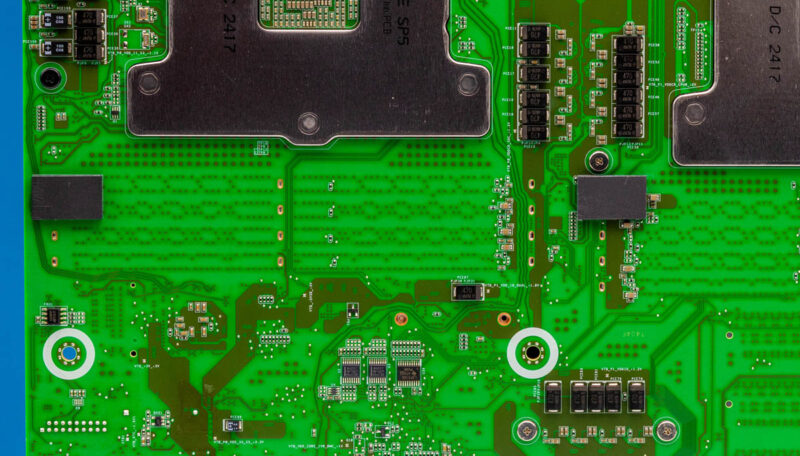

The management complex is in a different place than on many server motherboards, where it sits on the side with the rear I/O. Instead, it is in the bottom/middle portion of the motherboard. Here we can see the ASPEED AST2600 BMC and other bits like the DRAM, the microSD card reader, and the BMC firmware/BIOS.

Below that we have a header for a COM port and a POST code status display.

Above CPU1, we get three MCIO x8 connectors. Two offer PCIe Gen5, CXL, and even SATA support. The third does not have the SATA support. With the right cables, this allows six PCIe Gen5 x4 NVMe SSDs or two NVMe SSDs and sixteen SATA devices.
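For those who want to sanity-check the drive counts above, here is a minimal sketch of the arithmetic. It assumes each MCIO x8 connector splits into two x4 NVMe links, or, where SATA is supported, into eight SATA ports (one per lane), which is what the sixteen SATA figure implies. The connector names and the mixed NVMe/SATA row are our own illustration, not something taken from ASRock Rack documentation, so check the manual and your cables before planning a build around it.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class McioConnector:
    name: str                    # hypothetical label, not the silkscreen name
    lanes: int = 8               # MCIO x8
    supports_sata: bool = False


def drive_options(connectors):
    """Return the distinct (nvme_x4_drives, sata_devices) totals."""
    per_connector = []
    for c in connectors:
        # Assumption: an x8 connector bifurcates into lanes // 4 NVMe drives.
        modes = [(c.lanes // 4, 0)]
        if c.supports_sata:
            # Assumption: one SATA device per lane in SATA mode.
            modes.append((0, c.lanes))
        per_connector.append(modes)

    totals = set()
    for combo in product(*per_connector):
        nvme = sum(mode[0] for mode in combo)
        sata = sum(mode[1] for mode in combo)
        totals.add((nvme, sata))
    return sorted(totals, reverse=True)


CONNECTORS = [
    McioConnector("MCIO-A", supports_sata=True),
    McioConnector("MCIO-B", supports_sata=True),
    McioConnector("MCIO-C"),  # PCIe Gen5 / CXL only, no SATA
]

if __name__ == "__main__":
    for nvme, sata in drive_options(CONNECTORS):
        print(f"{nvme} x NVMe (x4) + {sata} x SATA")
```

The first and last rows of the output match the two configurations described above: six NVMe SSDs, or two NVMe SSDs and sixteen SATA devices. The middle row only applies if the two SATA-capable connectors can be set to different modes independently, which we are assuming here.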

Next to those MCIO connectors is an ASMedia ASM1047. This is a USB hub that provides USB connectivity to the rear ports even though it is on the front half of the motherboard.

The top rear has the ATX 24-pin and two 8-pin power inputs.

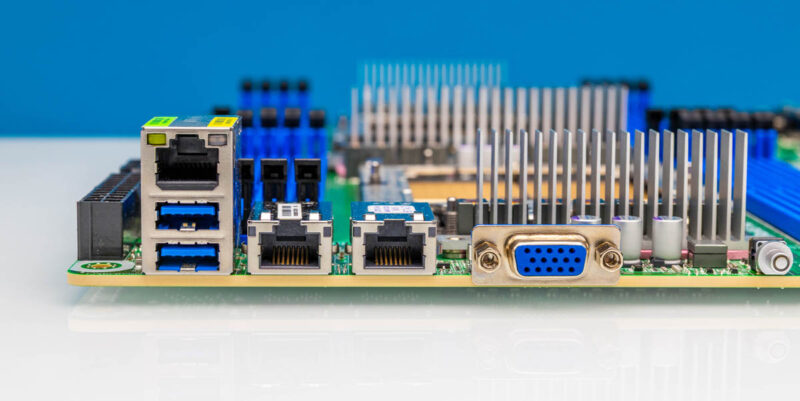

In terms of rear I/O, we get the out-of-band management port above two USB Type-A ports, and there is a legacy VGA port. The two main network ports are the Intel X710-AT2 10Gbase-T ports. The somewhat funny part about this rear I/O implementation is just how far away the Intel X710, ASPEED AST2600, and ASMedia ASM1047 are from the rear I/O. Normally we see components for the rear I/O next to the ports. ASRock Rack’s way to make room for the higher-priority high-speed lanes is to put the lower-speed rear I/O controllers all over the motherboard.

Normally we stop our hardware overview with the rear I/O. Here we found a very small but neat feature on the back of the motherboard.

If you look closely, there are two stand-off pads on the rear. These might be the smallest features, but after reviewing hundreds of motherboards, they stand out.

Next, let us get to the block diagram to see how ASRock Rack managed to fit all of this onto an EEB motherboard.

Is PCB tracing also responsible for the MCIO and triple power connectors effectively swapping places?

Similar looking to the Gigabyte MZ73-LM2, except that’s 500W CPUs, 4 PCIe 5, 2 x 10Gb/s LAN, and 24 DIMMs, etc.

I have an ASRock GENOAD8X-2TBCM with an 84c Epyc, which recently died within the warranty period (it doesn’t POST anymore), but my supplier is trying to screw me over, and I have now contacted my lawyer to get my money back. I have the feeling there was always something wrong with the GENOAD8X-2TBCM. I never could enter anything via remote management once the OS was booted. I also tried to install XCP-NG, but none of the NVMe drives were detected during install, so I installed Proxmox on it instead, which ran, but there were always random crashes.

This board looks like a great update. Unfortunately, it is still nowhere to be found in Europe. A disadvantage would be that I need a second 84c Epyc. Does anyone know if running an asymmetric configuration is possible, with a 32c Epyc and an 84c Epyc? Probably not, right? I’m a bit afraid that if I buy this board I also will not be able to install XCP-NG, which is my favorite hypervisor, since I have it running on a Ryzen 5950X and it runs for months without problems. If something crashes it is a VM, but the hypervisor keeps running without hiccups.

On the other hand, I’m contemplating a Supermicro H13SSL-NT: more memory slots but fewer PCIe 5.0 slots. I don’t like the smaller ATX form factor, and with Supermicro I had a bad experience with network cards getting too hot and dying because of that. I’m running these systems in a FractalXL case with a lot of fans, but it’s not a server-grade chassis with the accompanying jet stream of airflow that Supermicro might be expecting for their boards.

@Rupi

Both CPUs need to be identical.

XCP-ng is running a relatively old kernel (4.19) so it might have problems with newer hardware. For NVMe there’s an option in BIOS to override the device firmware with BIOS firmware. It’s in Advanced -> NVMe Configuration. It might make the drives visible in older kernels.

@Kyle, OK, thanks for the info. I could run it with 1 CPU for a while, although I can’t use my 100GB optical card until I buy another CPU. I expect prices to drop with the release of the Turin CPUs.

I even had that same problem installing XCP-ng 8.3; weird that they would use an older kernel with their latest release. Regarding the NVMe firmware, I never overrode the default setting, so hopefully that was the problem. Of course I can’t try it on my broken board, but I definitely will try it on this board if I decide to buy next week. I will have to order it from the US, which I have never done, and some customs tariffs will probably be imposed, but here in Europe mainboard prices are ridiculously high, so I guess that evens it out.

Hi

I’m not sure if folks noticed,

but it only has 3 QPI lanes between processors.

It does not support the higher-end 450W Turins.

It may have 5 PCIe slots, but only 3 are PCIe Gen 5.

The onboard M.2 is PCIe Gen 4.

All in all, with few exceptions (an extra port for 2 more x4 CXL or NVMe devices), it seems like a downgrade from the Gigabyte MZ73-LM0 Rev 2 or Rev 3.

Did I miss something?

Jay

Jay, they’ve got an entire section devoted to that. That GB board’s got more DDR5 but 24 fewer PCIe lanes. Gen 4 or Gen 5, you often need slots and lanes. If you’re buying a 32 core EPYC then you might be connecting network and SSDs, but you’re not gonna fill 12ch 9 of 10 times.

Now THIS is what I referred to in my comments on the 48-DIMM motherboards STH covered a while back! THIS is what I talking about! Put one CPU and memory stack IN FRONT the other and then 48 DIMMs (24 per processor) won’t be such a problem to physically place and route. In fact, adapt THIS board a little by eliminating the PCIe 4.0 slots, re-arrange the other components (BMC, glue logic, I/O chips, VRMs, MCIO connections etc.) a bit and this board could even take 24 DIMMs at 12 per CPU! Re-interpret this board into a more typical board for a rackmount and the footprint will allow a full 64 DDR4 or DDR5 DIMMs, WITHOUT increasing the width OR needing to create a special proprietary board just for that purpose! You might even could get away with this even on dual LGA-7529 CPUs (which I think are a good bit larger than AMD’s socket SP5/LGA-6096)!! Not sure about the upcoming LGA-9324 for Diamond Rapids, though, as THAT CPU might be a bit too big for even this to work.

EDIT: Insert the word “was” between the “I” and “talking” into the line “THIS is what I talking about!”. I can’t proofread apparently.

@Harold, if you look at the Phoronix articles “DDR5 Memory Channel Scaling Performance With AMD EPYC 9004 Series” and “8 vs. 12 Channel DDR5-6000 Memory Performance With AMD 5th Gen EPYC” you’ll have your answer, filling the slots (and more of them) is like free cores; it can add significant speed ups, and allow you to use smaller DIMMs at a lower cost.

The 2x MCIO 8i is what’s replacing PCIe slots that are too far away (without retimers).

With that MB you still get 2 slots with one CPU, unlike the ASRock which requires 2 CPUs to be usable. I think we need to hear “why” the ASRock is “better”, not simply a declaration that it is; when available information suggests otherwise.